SemanticPaint: A Framework for the Interactive Segmentation of 3D Scenes

PubDate: Oct 2015

Teams: University of Oxford; Microsoft; Nankai University; Stanford University

Writers: Stuart Golodetz, Michael Sapienza, Julien P. C. Valentin, Vibhav Vineet, Ming-Ming Cheng, Anurag Arnab, Victor A. Prisacariu, Olaf Kähler, Carl Yuheng Ren, David W. Murray, Shahram Izadi, Philip H. S. Torr

PDF: SemanticPaint: A Framework for the Interactive Segmentation of 3D Scenes

Abstract

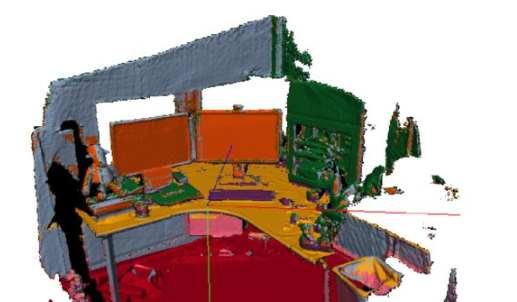

We present an open-source, real-time implementation of SemanticPaint, a system for geometric reconstruction, object-class segmentation and learning of 3D scenes. Using our system, a user can walk into a room wearing a depth camera and a virtual reality headset, and both densely reconstruct the 3D scene and interactively segment the environment into object classes such as ‘chair’, ‘floor’ and ‘table’. The user interacts physically with the real-world scene, touching objects and using voice commands to assign them appropriate labels. An online random forest-based learning algorithm uses these user-generated labels to predict labels for previously unseen parts of the scene. The entire pipeline runs in real time, and the user stays ‘in the loop’ throughout, receiving immediate feedback on the progress of the labelling and interacting with the scene as necessary to refine the predicted segmentation.
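To make the label-then-predict loop concrete, the following is a minimal toy sketch, not the SemanticPaint implementation. It assumes each scene element is summarised by a small feature vector scaled to [0, 1] (the two features used here, and the names `RandomStump` and `OnlineStumpForest`, are hypothetical), and it replaces the paper's online random forest with a much simpler ensemble of fixed random depth-1 trees whose leaf histograms are updated one labelled sample at a time.

```python
import random
from collections import Counter


class RandomStump:
    """A depth-1 tree: one random feature/threshold split, one label histogram per leaf."""

    def __init__(self, n_features, rng):
        self.feature = rng.randrange(n_features)
        self.threshold = rng.random()  # assumption: features are scaled to [0, 1]
        self.leaves = (Counter(), Counter())

    def _leaf(self, x):
        return self.leaves[1 if x[self.feature] > self.threshold else 0]

    def update(self, x, label):
        # Online update: only the histogram of the reached leaf changes.
        self._leaf(x)[label] += 1

    def votes(self, x):
        return self._leaf(x)


class OnlineStumpForest:
    """Toy stand-in for an online random forest (hypothetical, for illustration only)."""

    def __init__(self, n_features, n_trees=50, seed=0):
        rng = random.Random(seed)
        self.trees = [RandomStump(n_features, rng) for _ in range(n_trees)]

    def update(self, x, label):
        """Incorporate one user-labelled sample without retraining from scratch."""
        for tree in self.trees:
            tree.update(x, label)

    def predict(self, x):
        """Average the normalised leaf histograms; return None if nothing is known yet."""
        total = Counter()
        for tree in self.trees:
            leaf = tree.votes(x)
            n = sum(leaf.values())
            for label, count in leaf.items():
                total[label] += count / n
        return total.most_common(1)[0][0] if total else None


# Hypothetical features: (normalised height, a second placeholder feature).
forest = OnlineStumpForest(n_features=2)
user_labels = [
    ((0.1, 0.5), "floor"),
    ((0.2, 0.5), "floor"),
    ((0.8, 0.5), "table"),
    ((0.9, 0.5), "table"),
]
for features, label in user_labels:  # the user "touches" an element and names it
    forest.update(features, label)

print(forest.predict((0.15, 0.5)))  # prediction for an element the user never labelled
print(forest.predict((0.85, 0.5)))
```

Because each update touches only one leaf histogram per tree, new labels can be folded in as they arrive, which is the property that lets a user stay in the loop and correct predictions interactively. A real online random forest would also grow and re-split its trees as data accumulates, which this sketch omits.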