DPLM: A Deep Perceptual Spatial-Audio Localization Metric

PubDate: May 2021

Teams: Princeton University;Facebook Reality Labs Research

Writers: Pranay Manocha, Anurag Kumar, Buye Xu, Anjali Menon, Israel D. Gebru, Vamsi K. Ithapu, Paul Calamia

PDF: DPLM: A Deep Perceptual Spatial-Audio Localization Metric

Abstract

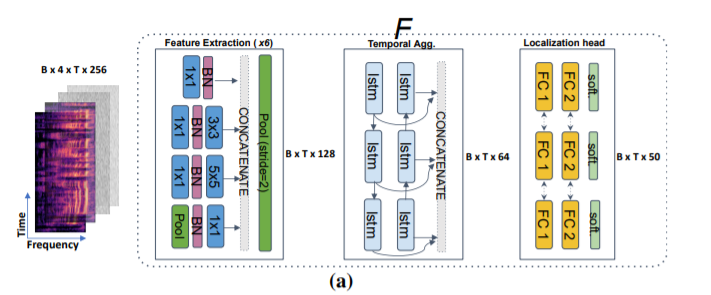

Subjective evaluations are critical for assessing the perceptual realism of sounds in audio-synthesis driven technologies like augmented and virtual reality. However, they are challenging to set up, fatiguing for users, and expensive. In this work, we tackle the problem of capturing the perceptual characteristics of localizing sounds. Specifically, we propose a framework for building a general purpose quality metric to assess spatial localization differences between two binaural recordings. We model localization similarity by utilizing activation-level distances from deep networks trained for direction of arrival (DOA) estimation. Our proposed metric (DPLM) outperforms baseline metrics on correlation with subjective ratings on a diverse set of datasets, even without the benefit of any human-labeled training data.