Differentiable Diffusion for Dense Depth Estimation from Multi-view Images

PubDate: Jun 2021

Teams: Brown University;KAIST

Writers: Numair Khan, Min H. Kim, James Tompkin

Abstract

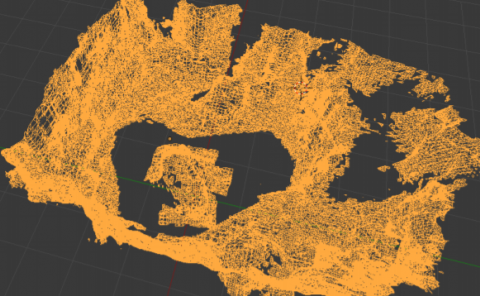

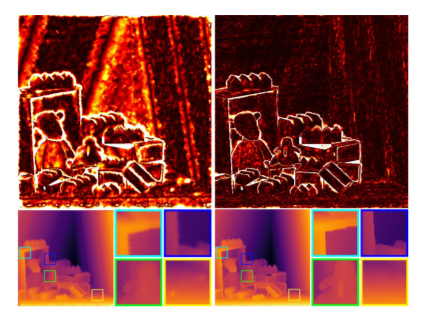

We present a method to estimate dense depth by optimizing a sparse set of points such that their diffusion into a depth map minimizes a multi-view reprojection error from RGB supervision. We optimize point positions, depths, and weights with respect to the loss by differentiable splatting that models points as Gaussians with analytic transmittance. Further, we develop an efficient optimization routine that can simultaneously optimize the 50k+ points required for complex scene reconstruction. We validate our routine using ground truth data and show high reconstruction quality. Then, we apply this to light field and wider-baseline images via self-supervision, and show improvements in both average and outlier error for depth maps diffused from inaccurate sparse points. Finally, we compare our results qualitatively and quantitatively with image processing and deep learning methods.
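To make the idea of spreading sparse points into a dense depth map more concrete, the following is a minimal NumPy sketch of normalized Gaussian splatting. It is a simplified stand-in, not the method from the paper: it omits the analytic transmittance model, the diffusion step, and the multi-view reprojection loss. The function name `splat_depth`, its parameters, and the single shared `sigma` are assumptions made for illustration only.

```python
import numpy as np


def splat_depth(points_xy, depths, weights, sigma, height, width, eps=1e-12):
    """Blend sparse depth samples into a dense map with normalized Gaussian weights.

    Hypothetical helper for illustration; not the paper's implementation.
    points_xy: (N, 2) array of (x, y) pixel positions.
    depths:    (N,) depth value carried by each point.
    weights:   (N,) non-negative per-point weight.
    sigma:     shared Gaussian standard deviation in pixels (an assumption).
    """
    ys, xs = np.mgrid[0:height, 0:width]               # pixel grids, shape (H, W)
    dx = xs[None, :, :] - points_xy[:, 0, None, None]  # (N, H, W) x offsets
    dy = ys[None, :, :] - points_xy[:, 1, None, None]  # (N, H, W) y offsets
    # Weighted isotropic Gaussian footprint of every point at every pixel.
    g = weights[:, None, None] * np.exp(-(dx**2 + dy**2) / (2.0 * sigma**2))
    # Weighted average of point depths; eps guards against division by zero.
    return (g * depths[:, None, None]).sum(axis=0) / (g.sum(axis=0) + eps)


# Two points with different depths on a small 8x8 image.
points_xy = np.array([[1.0, 4.0], [6.0, 4.0]])
depths = np.array([1.0, 3.0])
weights = np.array([1.0, 1.0])
dense = splat_depth(points_xy, depths, weights, sigma=2.0, height=8, width=8)
```

Every operation here is smooth in the point positions, depths, and weights, so writing the same expression in an automatic differentiation framework would yield gradients with respect to all three. This sketch materializes an N x H x W array, so it would not scale to the 50k+ points mentioned in the abstract without a more efficient formulation.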