Human Hair Inverse Rendering using Multi-View Photometric data

PubDate: June 29, 2021

Teams: University of California; Facebook Reality Labs

Writers: Tiancheng Sun, Giljoo Nam, Carlos Aliaga, Christophe Hery, Ravi Ramamoorthi

PDF: Human Hair Inverse Rendering using Multi-View Photometric data

Abstract

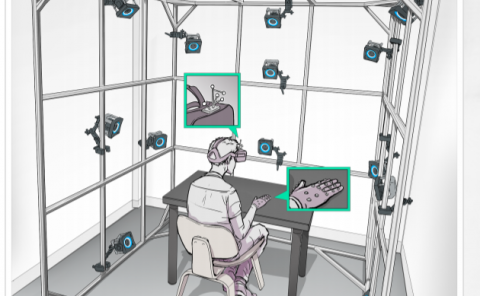

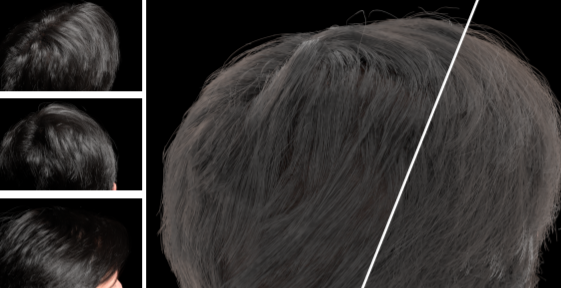

We introduce a hair inverse rendering framework that reconstructs high-fidelity 3D geometry of human hair, as well as its reflectance, both of which can be readily used for photorealistic hair rendering. We take multi-view photometric data as input, i.e., a set of images captured from various viewpoints under different lighting conditions. Our method consists of two stages. First, we propose a novel solution for line-based multi-view stereo that yields accurate hair geometry from multi-view photometric data. Specifically, a per-pixel lightcode is proposed to efficiently solve the hair correspondence matching problem. This solution enables accurate and dense strand reconstruction from fewer cameras than state-of-the-art work. In the second stage, we estimate hair reflectance properties from the multi-view photometric data. A simplified BSDF model of hair strands is used for realistic appearance reproduction. Based on the reconstructed 3D strand geometry, we fit the longitudinal roughness and recover the single-strand color. We show that our method can faithfully reproduce the appearance of human hair and improve the realism of digital humans. We demonstrate the accuracy and efficiency of our method using photorealistic synthetic hair rendering data.
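The abstract names the per-pixel lightcode but does not say how a code is built or compared. As a rough illustration only, the sketch below assumes that a lightcode is the binary pattern recording which of the K lighting conditions make a pixel brighter than its own average, and that candidate pixels in a second view (for example, pixels sampled along an epipolar line) are ranked by Hamming distance. The names `lightcode` and `best_match` and the binarization rule are hypothetical; this is not the authors' method.

```python
import numpy as np


def lightcode(stack: np.ndarray) -> np.ndarray:
    """Compute a hypothetical binary lightcode for every pixel.

    stack: array of shape (K, H, W), one grayscale image per lighting condition.
    Returns a boolean array of shape (H, W, K) that is True where the pixel is
    brighter than its own mean over the K lighting conditions (assumed rule).
    """
    mean = stack.mean(axis=0, keepdims=True)
    return np.moveaxis(stack > mean, 0, -1)


def best_match(code: np.ndarray, candidates: np.ndarray) -> int:
    """Return the index of the candidate code with the smallest Hamming distance.

    code: (K,) boolean lightcode of the query pixel.
    candidates: (N, K) boolean lightcodes, e.g. sampled along an epipolar line.
    """
    distances = np.count_nonzero(candidates != code, axis=1)
    return int(np.argmin(distances))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    K, H, W = 16, 4, 5
    view_a = rng.random((K, H, W))
    # Toy second view: the same scene shifted one pixel to the right,
    # so pixel (2, 1) in view A corresponds to pixel (2, 2) in view B.
    view_b = np.roll(view_a, shift=1, axis=2)

    codes_a, codes_b = lightcode(view_a), lightcode(view_b)
    idx = best_match(codes_a[2, 1], codes_b[2])  # search along row 2 of view B
    print("best candidate column:", idx)
```

Thresholding each pixel against its own mean makes the code unchanged when that pixel's intensities are all scaled by the same positive factor, so under this assumption strand albedo or exposure differences do not break the match. A real pipeline would likely add geometric constraints (epipolar geometry, strand orientation) on top of the code comparison.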