CoolMoves: User Motion Accentuation in Virtual Reality

PubDate: June 2021

Teams: Carnegie Mellon University;Microsoft Research;ETH Zürich

Writers: Karan Ahuja; Eyal Ofek; Mar Gonzalez Franco; Christian Holz; Andy Wilson

PDF: CoolMoves: User Motion Accentuation in Virtual Reality

Abstract

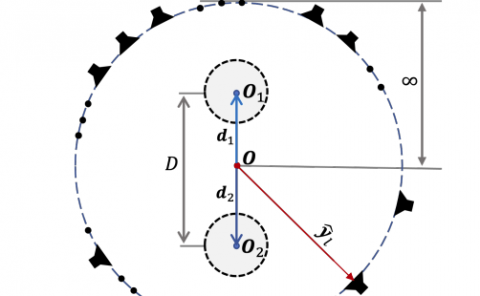

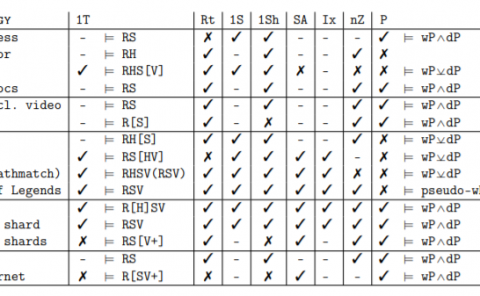

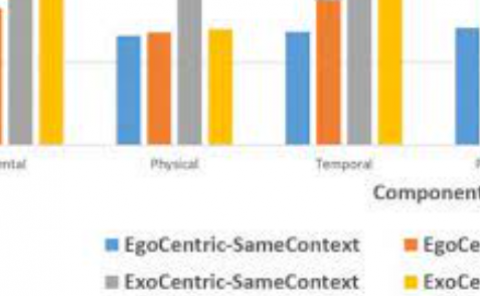

Current Virtual Reality (VR) systems lack stylization and embellishment of the user’s motion, concepts that have been well explored in animation for games and movies. We present CoolMoves, a system for expressive and accentuated full-body motion synthesis of a user’s virtual avatar in real time, from the limited input cues afforded by current consumer-grade VR systems, specifically headset and hand positions. We use existing motion capture databases as a template motion repository. We match similar spatio-temporal motions present in the database and then interpolate between them using a weighted distance metric. Joint prediction probability is then used to temporally smooth the synthesized motion, using human motion dynamics as a prior. This allows our system to work well even with very sparse motion databases (e.g., with only 3-5 motions per action). We validate our system with four experiments: a quantitative technical evaluation of pose reconstruction and three user studies that evaluate motion quality, embodiment, and agency.
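The match-then-interpolate idea in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not specify the weighted distance metric or how joint prediction probability drives the smoothing, so this sketch assumes plain inverse-distance weighting over the k nearest database entries and substitutes simple exponential smoothing for the temporal step. The function names (`synthesize_pose`, `smooth`) and the flat-list data layout are hypothetical.

```python
import math


def distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def synthesize_pose(query, database, k=3, eps=1e-8):
    """Blend the full-body poses of the k database entries nearest to the query.

    query:    flattened headset + hand features (assumed layout).
    database: list of (features, pose) pairs, where pose is a flat list
              of joint values for the full body.
    Weights are inverse distances (an assumption; the paper's metric
    is not given in the abstract).
    """
    nearest = sorted(database, key=lambda entry: distance(query, entry[0]))[:k]
    weights = [1.0 / (distance(query, feats) + eps) for feats, _ in nearest]
    total = sum(weights)
    n_values = len(nearest[0][1])
    return [
        sum(w * pose[i] for w, (_, pose) in zip(weights, nearest)) / total
        for i in range(n_values)
    ]


def smooth(previous, current, alpha=0.5):
    """Exponential smoothing between consecutive synthesized poses.

    A stand-in for the probability-driven temporal smoothing described
    in the abstract; alpha is a hypothetical blending factor.
    """
    if previous is None:
        return list(current)
    return [alpha * c + (1.0 - alpha) * p for p, c in zip(previous, current)]
```

In a per-frame loop, each incoming headset-and-hands sample would be passed to `synthesize_pose`, and the result blended with the previous frame's output through `smooth`. A small `k` mirrors the abstract's point that only a few template motions per action may be available.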