Integrating Deep Learning and Augmented Reality to Enhance Situational Awareness in Firefighting Environments

PubDate: Jul 2021

Teams: University of New Mexico

Writers: Manish Bhattarai

Abstract

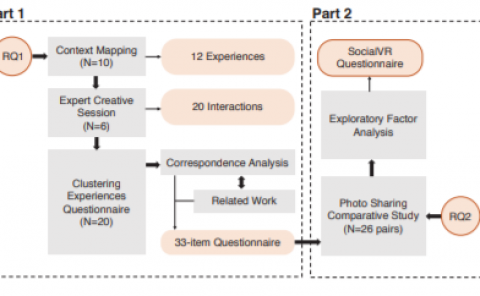

We present a new four-pronged approach to building firefighters' situational awareness, the first of its kind in the literature. We construct a series of deep learning frameworks, built on top of one another, to enhance the safety, efficiency, and successful completion of rescue missions conducted by firefighters in emergency first-response settings. First, we used a deep Convolutional Neural Network (CNN) system to classify and identify objects of interest from thermal imagery in real time. Second, we extended this CNN framework to object detection, tracking, and segmentation with a Mask R-CNN framework, and to scene description with a multimodal natural language processing (NLP) framework. Third, we built a deep Q-learning-based agent, immune to stress-induced disorientation and anxiety, that is capable of making clear navigation decisions based on observed and stored facts in live-fire environments. Fourth, we used a computationally inexpensive unsupervised learning technique, tensor decomposition, to extract meaningful features for real-time anomaly detection. These ad-hoc deep learning structures form the backbone of the artificial intelligence system for firefighters' situational awareness. To bring the system into use by firefighters, we designed a physical structure in which the processed results serve as inputs to an augmented reality display. The display advises firefighters of their location and of key features around them that are vital to the rescue operation at hand, and it includes a path-planning feature that acts as a virtual guide to help disoriented first responders get back to safety. Combined, these four components form a novel approach to information understanding, transfer, and synthesis that could dramatically improve firefighter response and efficacy and reduce loss of life.
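As a rough illustration of the anomaly-detection idea mentioned in the abstract, the sketch below scores a frame by how poorly a low-rank model of "normal" frames reconstructs it. It is not the authors' method: it substitutes a plain matrix SVD for the tensor decomposition the abstract names, and the synthetic data, the function names `fit_basis` and `anomaly_score`, and the chosen rank are all assumptions made for the example.

```python
import numpy as np


def fit_basis(frames, rank):
    """Fit a low-rank model to flattened frames of shape (n_frames, n_pixels).

    Returns the mean frame and the top `rank` right singular vectors.
    """
    mean = frames.mean(axis=0)
    _, _, vt = np.linalg.svd(frames - mean, full_matrices=False)
    return mean, vt[:rank]


def anomaly_score(frame, mean, basis):
    """Norm of the part of `frame` that the low-rank basis cannot reconstruct."""
    centered = frame - mean
    reconstruction = basis.T @ (basis @ centered)
    return float(np.linalg.norm(centered - reconstruction))


# Synthetic stand-in for thermal frames (assumption): every normal frame is a
# mix of 3 latent patterns over 64 "pixels", plus a little sensor noise.
rng = np.random.default_rng(0)
patterns = rng.normal(size=(3, 64))
weights = rng.normal(size=(200, 3))
normal_frames = weights @ patterns + 0.01 * rng.normal(size=(200, 64))

mean, basis = fit_basis(normal_frames, rank=3)

# A frame that follows the normal patterns, and one with extra structure
# that the fitted basis has never seen.
typical = rng.normal(size=3) @ patterns
unusual = typical + 2.0 * rng.normal(size=64)

typical_score = anomaly_score(typical, mean, basis)
unusual_score = anomaly_score(unusual, mean, basis)
print(f"typical: {typical_score:.3f}  unusual: {unusual_score:.3f}")
```

Scoring a new frame costs only two small matrix-vector products once the basis is fitted, which is the kind of low computational cost that makes a decomposition-based detector plausible for real-time use. A threshold on the score would have to be calibrated on real data.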