Learning-based Prediction, Rendering and Transmission for Interactive Virtual Reality in RIS-Assisted Terahertz Networks

PubDate: Jul 2021

Teams: Paris-Saclay University

Writers: Xiaonan Liu, Yansha Deng, Chong Han, Marco Di Renzo

Abstract

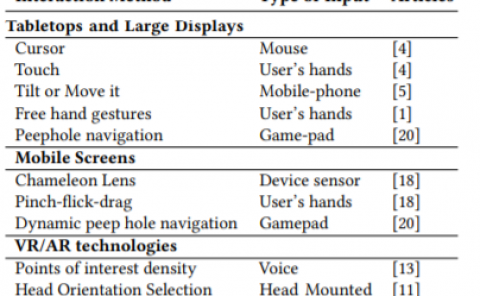

The quality of experience (QoE) requirements of wireless Virtual Reality (VR) can only be satisfied with a high data rate, high reliability, and low VR interaction latency. Such high data rates over short transmission distances may be achieved by exploiting the abundant bandwidth of the terahertz (THz) band. However, THz waves suffer from severe signal attenuation, which may be compensated by reconfigurable intelligent surface (RIS) technology with programmable reflecting elements. Meanwhile, low VR interaction latency may be achieved with the mobile edge computing (MEC) network architecture owing to its high computation capability. Motivated by these considerations, in this paper we propose an MEC-enabled and RIS-assisted THz VR network in an indoor scenario, taking into account uplink viewpoint prediction and position transmission, MEC rendering, and downlink transmission. We propose two methods, referred to as centralized online Gated Recurrent Unit (GRU) and distributed Federated Averaging (FedAvg), to predict the viewpoints of VR users. In the uplink, an algorithm that integrates online Long Short-Term Memory (LSTM) and Convolutional Neural Networks (CNN) is deployed to predict the locations of the VR users and their line-of-sight and non-line-of-sight statuses over time. In the downlink, we further develop a constrained deep reinforcement learning algorithm to select the optimal phase shifts of the RIS under latency constraints. Simulation results show that our proposed learning architecture achieves near-optimal QoE, close to that of the genie-aided benchmark algorithm, and about a twofold improvement in QoE compared with the random phase shift selection scheme.
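The distributed FedAvg option can be illustrated by its server-side aggregation step. In standard FedAvg, each client trains a local copy of the model, and the server averages the resulting parameters, weighting each client by its share of the total training samples. The sketch below is a minimal illustration under stated assumptions, not the authors' implementation. It assumes model parameters are flattened into plain lists of floats, and the helper name `fedavg_aggregate` and the toy numbers are hypothetical. It omits the local GRU training performed on each VR user's device.

```python
from typing import List, Sequence


def fedavg_aggregate(client_weights: Sequence[Sequence[float]],
                     client_num_samples: Sequence[int]) -> List[float]:
    """Weighted average of client parameter vectors (FedAvg server step).

    Hypothetical helper: each client's flattened parameters are weighted
    by n_k / n, where n_k is that client's local sample count and n is
    the total number of samples across all clients.
    """
    if len(client_weights) != len(client_num_samples):
        raise ValueError("one sample count is needed per client")
    if not client_weights:
        raise ValueError("at least one client is required")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same parameter shape")
    total = sum(client_num_samples)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    global_weights = [0.0] * dim
    for weights, n_k in zip(client_weights, client_num_samples):
        scale = n_k / total  # contribution proportional to local data size
        for i, w in enumerate(weights):
            global_weights[i] += scale * w
    return global_weights


if __name__ == "__main__":
    # Toy example: three hypothetical VR users with 2-parameter models.
    users = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    samples = [10, 30, 60]
    print(fedavg_aggregate(users, samples))
```

Weighting by sample count keeps users with more recorded head-motion data from being diluted by users with little data. Only parameters, not raw viewpoint traces, leave each device, which is the usual motivation for choosing a federated rather than centralized scheme.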