A Benchmark of Four Methods for Generating 360° Saliency Maps from Eye Tracking Data

PubDate: January 2019

Teams: University of Florida

Writers: Brendan John; Pallavi Raiturkar; Olivier Le Meur; Eakta Jain


Abstract

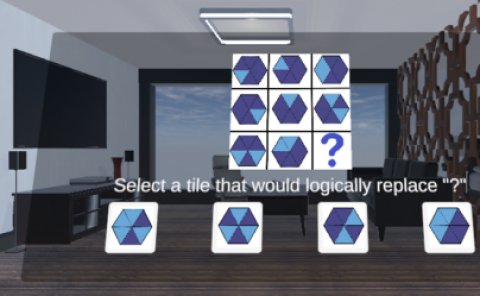

Modeling and visualizing user attention in Virtual Reality is important for many applications, such as gaze prediction, robotics, retargeting, video compression, and rendering. Several methods have been proposed to model eye tracking data as saliency maps. We benchmark the performance of four such methods for 360° images. We provide a comprehensive analysis and implementations of these methods to assist researchers and practitioners. Finally, we make recommendations based on our benchmark analyses and the ease of implementation.
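To make the idea of modeling eye tracking data as a saliency map concrete, the sketch below shows one generic way fixations on a 360° image could be turned into an equirectangular saliency map: each fixation contributes a Gaussian falloff measured by great-circle distance on the sphere. This is an illustrative assumption and not necessarily one of the four benchmarked methods. The function name `fixations_to_saliency`, the grid size, and the `sigma_deg` value are all hypothetical choices.

```python
import numpy as np


def fixations_to_saliency(fixations_deg, height=90, width=180, sigma_deg=3.0):
    """Accumulate fixations into an equirectangular saliency map.

    fixations_deg: iterable of (longitude, latitude) pairs in degrees,
    with longitude in [-180, 180) and latitude in [-90, 90].
    sigma_deg: angular width of the Gaussian (an assumed value).
    """
    # Pixel-center coordinates of the equirectangular grid, in radians.
    lon = np.deg2rad((np.arange(width) + 0.5) / width * 360.0 - 180.0)
    lat = np.deg2rad(90.0 - (np.arange(height) + 0.5) / height * 180.0)
    lon_grid, lat_grid = np.meshgrid(lon, lat)

    sigma = np.deg2rad(sigma_deg)
    saliency = np.zeros((height, width))

    for f_lon, f_lat in np.deg2rad(np.asarray(fixations_deg, dtype=float)):
        # Great-circle (angular) distance from the fixation to every pixel,
        # so the kernel wraps across the left/right image border.
        cos_d = (np.sin(lat_grid) * np.sin(f_lat)
                 + np.cos(lat_grid) * np.cos(f_lat) * np.cos(lon_grid - f_lon))
        d = np.arccos(np.clip(cos_d, -1.0, 1.0))
        saliency += np.exp(-0.5 * (d / sigma) ** 2)

    # Plain pixel-sum normalization (does not weight pixels by solid angle).
    total = saliency.sum()
    return saliency / total if total > 0 else saliency
```

Measuring distance on the sphere rather than in pixel space avoids the stretching that a flat 2D Gaussian would suffer near the poles of an equirectangular image, and it handles fixations near the ±180° seam without special cases.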