Revisiting Distance Perception with Scaled Embodied Cues in Social Virtual Reality

PubDate: May 2021

Teams: University of Central Florida; University of Utah

Writers: Zubin Choudhary; Matthew Gottsacker; Kangsoo Kim; Ryan Schubert; Jeanine Stefanucci; Gerd Bruder; Gregory F. Welch

PDF: Revisiting Distance Perception with Scaled Embodied Cues in Social Virtual Reality

Abstract

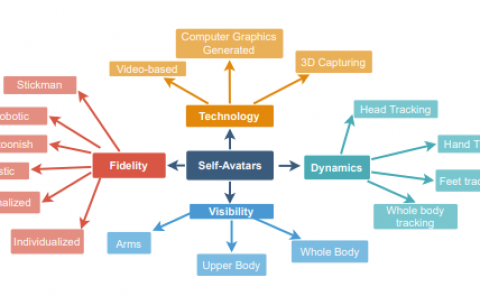

Previous research on distance estimation in virtual reality (VR) has well established that, even for geometrically accurate virtual objects and environments, users tend to systematically mis-estimate distances. This has implications for Social VR, where it introduces variables in personal space and proxemic behavior that change social behaviors compared to the real world. One as-yet unexplored factor is the trend that avatars’ embodied cues in Social VR are often scaled, e.g., by making one’s head bigger or one’s voice louder, to make social cues more pronounced over longer distances. In this paper, we investigate how the perception of avatar distance changes with two means of scaling embodied social cues: visual head scale and verbal volume scale. We conducted a human-subject study employing a mixed factorial design with two Social VR avatar representations (full-body, head-only) as a between-subjects factor and three visual head scales and three verbal volume scales (up-scaled, accurate, down-scaled) as within-subjects factors. For three distances from social to far-public space, we found that visual head scale had a significant effect on distance judgments and should be tuned for Social VR, while conflicting verbal volume scales did not, indicating that voices can be scaled in Social VR without immediate repercussions on spatial estimates. We discuss the interactions between the factors and implications for Social VR.
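To make the size of the design concrete, the following is a minimal sketch that enumerates the condition grid described in the abstract. The factor levels are taken from the abstract, but the abstract gives no numeric scale values or distances, so the distance labels below are hypothetical placeholders. Treating distance as a within-subjects factor is also an assumption, since the abstract does not say so explicitly.

```python
from itertools import product

# Factor levels as named in the abstract (no numeric scale values are given there).
AVATAR_REPRESENTATIONS = ["full-body", "head-only"]        # between-subjects factor
HEAD_SCALES = ["up-scaled", "accurate", "down-scaled"]     # within-subjects factor
VOLUME_SCALES = ["up-scaled", "accurate", "down-scaled"]   # within-subjects factor

# Assumption: placeholder labels for the three tested distances,
# which the abstract only describes as ranging from social to far-public space.
DISTANCES = ["distance-1", "distance-2", "distance-3"]


def within_conditions():
    """Head-scale x volume-scale x distance combinations seen by one participant."""
    return list(product(HEAD_SCALES, VOLUME_SCALES, DISTANCES))


def all_cells():
    """Every cell of the design, with the avatar representation prepended."""
    return [
        (avatar, *condition)
        for avatar in AVATAR_REPRESENTATIONS
        for condition in within_conditions()
    ]


print(len(within_conditions()))  # 27
print(len(all_cells()))          # 54
```

Under these assumptions, each participant would see 27 combinations within a single avatar representation, and the full design has 54 cells across both groups.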