Ego4D: Around the World in 3,000 Hours of Egocentric Video

PubDate: Oct 2021

Teams: (1) Facebook AI Research (FAIR); (2) University of Texas at Austin; (3) University of Minnesota; (4) University of Catania; (5) Facebook Reality Labs; (6) Georgia Tech; (7) Carnegie Mellon University; (8) UC Berkeley; (9) University of Bristol; (10) King Abdullah University of Science and Technology; (11) National University of Singapore; (12) Carnegie Mellon University Africa; (13) Facebook; (14) Universidad de los Andes; (15) University of Tokyo; (16) Indiana University; (17) International Institute of Information Technology, Hyderabad; (18) MIT; (19) University of Pennsylvania; (20) Dartmouth

Writers: Kristen Grauman (1,2), Andrew Westbury (1), Eugene Byrne∗ (1), Zachary Chavis∗ (3), Antonino Furnari∗ (4), Rohit Girdhar∗ (1), Jackson Hamburger∗ (1), Hao Jiang∗ (5), Miao Liu∗ (6), Xingyu Liu∗ (7), Miguel Martin∗ (1), Tushar Nagarajan∗ (1,2), Ilija Radosavovic∗ (8), Santhosh Kumar Ramakrishnan∗ (1,2), Fiona Ryan∗ (6), Jayant Sharma∗ (3), Michael Wray∗ (9), Mengmeng Xu∗ (10), Eric Zhongcong Xu∗ (11), Chen Zhao∗ (10), Siddhant Bansal (17), Dhruv Batra (1), Vincent Cartillier (1,6), Sean Crane (7), Tien Do (3), Morrie Doulaty (13), Akshay Erapalli (13), Christoph Feichtenhofer (1), Adriano Fragomeni (9), Qichen Fu (7), Christian Fuegen (13), Abrham Gebreselasie (12), Cristina González (14), James Hillis (5), Xuhua Huang (7), Yifei Huang (15), Wenqi Jia (6), Weslie Khoo (16), Jachym Kolar (13), Satwik Kottur (13), Anurag Kumar (5), Federico Landini (13), Chao Li (5), Yanghao Li (1), Zhenqiang Li (15), Karttikeya Mangalam (1,8), Raghava Modhugu (17), Jonathan Munro (9), Tullie Murrell (1), Takumi Nishiyasu (15), Will Price (9), Paola Ruiz Puentes (14), Merey Ramazanova (10), Leda Sari (5), Kiran Somasundaram (5), Audrey Southerland (6), Yusuke Sugano (15), Ruijie Tao (11), Minh Vo (5), Yuchen Wang (16), Xindi Wu (7), Takuma Yagi (15), Yunyi Zhu (11), Pablo Arbeláez† (14), David Crandall† (16), Dima Damen† (9), Giovanni Maria Farinella† (4), Bernard Ghanem† (10), Vamsi Krishna Ithapu† (5), C. V. Jawahar† (17), Hanbyul Joo† (1), Kris Kitani† (7), Haizhou Li† (11), Richard Newcombe† (5), Aude Oliva† (18), Hyun Soo Park† (3), James M. Rehg† (6), Yoichi Sato† (15), Jianbo Shi† (19), Mike Zheng Shou† (11), Antonio Torralba† (18), Lorenzo Torresani† (1,20), Mingfei Yan† (5), Jitendra Malik (1,8)

PDF: Ego4D: Around the World in 3,000 Hours of Egocentric Video

Project: Ego4D: Around the World in 3,000 Hours of Egocentric Video

Abstract

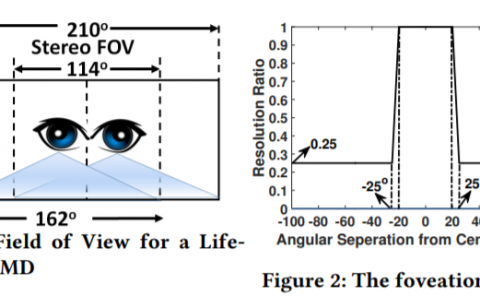

We introduce Ego4D, a massive-scale egocentric video dataset and benchmark suite. It offers 3,025 hours of daily-life activity video spanning hundreds of scenarios (household, outdoor, workplace, leisure, etc.) captured by 855 unique camera wearers from 74 worldwide locations and 9 different countries. The approach to collection is designed to uphold rigorous privacy and ethics standards, with consenting participants and robust de-identification procedures where relevant. Ego4D dramatically expands the volume of diverse egocentric video footage publicly available to the research community. Portions of the video are accompanied by audio, 3D meshes of the environment, eye gaze, stereo, and/or synchronized videos from multiple egocentric cameras at the same event. Furthermore, we present a host of new benchmark challenges centered around understanding the first-person visual experience in the past (querying an episodic memory), present (analyzing hand-object manipulation, audio-visual conversation, and social interactions), and future (forecasting activities). By publicly sharing this massive annotated dataset and benchmark suite, we aim to push the frontier of first-person perception.