Neural Synthesis of Binaural Speech from Mono Audio

PubDate: May 4, 2021

Teams: Facebook Reality Labs

Writers: Alexander Richard, Dejan Markovic, Israel D. Gebru, Steven Krenn, Gladstone Butler, Fernando De la Torre, Yaser Sheikh

PDF: Neural Synthesis of Binaural Speech from Mono Audio

Abstract

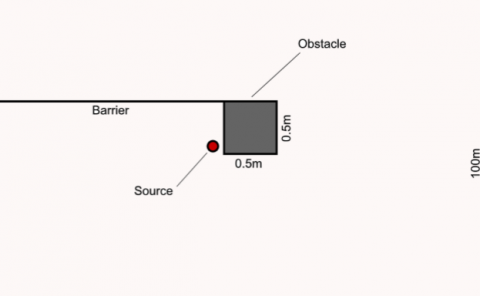

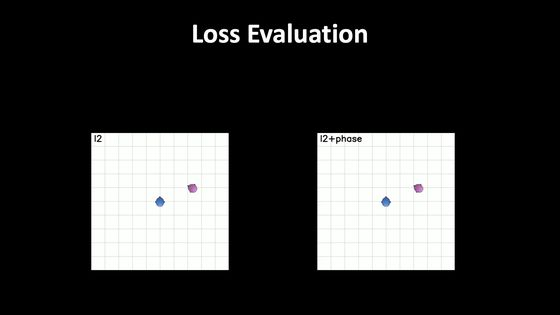

We present a neural rendering approach for binaural sound synthesis that can produce realistic and spatially accurate binaural sound in real time. The network takes, as input, a single-channel audio source and synthesizes, as output, two-channel binaural sound, conditioned on the relative position and orientation of the listener with respect to the source. We investigate deficiencies of the ℓ2 loss on raw waveforms in a theoretical analysis and introduce an improved loss that overcomes these limitations. In an empirical evaluation, we establish that our approach is the first to generate spatially accurate waveform outputs (as measured by real recordings) and outperforms existing approaches by a considerable margin, both quantitatively and in a perceptual study. Dataset and code are available online: https://github.com/facebookresearch/BinauralSpeechSynthesis.
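The abstract does not spell out the analysis of the ℓ2 loss or the improved loss. The sketch below illustrates one well-known property that makes a raw-waveform ℓ2 loss weak at penalizing phase. For two sinusoids of amplitude A that differ only by a phase offset Δ, the mean squared error over whole periods is A²(1 − cos Δ). A phase error on a quiet component therefore costs almost nothing. An amplitude-independent phase comparison does not have this problem. The helpers `sinusoid`, `l2_loss`, `dft_bin`, and `phase_loss` are hypothetical and exist only for illustration. The single-bin phase loss is a simple stand-in and is not the loss used in the paper or its repository.

```python
import cmath
import math


def sinusoid(amplitude, phase, freq_bin=5, n=256):
    """Hypothetical helper: amplitude * sin(2*pi*freq_bin*t/n + phase), t = 0..n-1."""
    return [amplitude * math.sin(2 * math.pi * freq_bin * t / n + phase) for t in range(n)]


def l2_loss(x, y):
    """Mean squared error computed directly on raw waveform samples."""
    return sum((a - b) ** 2 for a, b in zip(x, y)) / len(x)


def dft_bin(x, k):
    """Single DFT coefficient X[k] = sum_t x[t] * exp(-2j*pi*k*t/n)."""
    n = len(x)
    return sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(x))


def phase_loss(x, y, k=5):
    """Illustrative stand-in: absolute wrapped phase difference at DFT bin k.

    It depends only on the angle of each coefficient, so it ignores amplitude.
    """
    diff = cmath.phase(dft_bin(x, k)) - cmath.phase(dft_bin(y, k))
    return abs(math.atan2(math.sin(diff), math.cos(diff)))  # wrap into [0, pi]


if __name__ == "__main__":
    for amp in (1.0, 0.01):
        x = sinusoid(amp, 0.0)
        y = sinusoid(amp, math.pi / 2)  # same quarter-period phase error at both amplitudes
        # The l2 loss scales with amp**2. The phase loss stays at pi/2.
        print(amp, l2_loss(x, y), phase_loss(x, y))
```

Shrinking the amplitude by a factor of 100 reduces the ℓ2 penalty for the same phase error by a factor of 10,000, while the phase term is unchanged. In a binaural setting, interaural phase cues carry spatial information, so a training signal that barely registers phase errors on low-energy components may leave them poorly learned. This is a motivating toy example under the stated assumptions, not a reproduction of the paper's analysis.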