VR Viewport Pose Model for Quantifying and Exploiting Frame Correlations

PubDate: Jan 2022

Teams: Duke University;Macquarie University

Writers: Ying Chen, Hojung Kwon, Hazer Inaltekin, Maria Gorlatova

Abstract

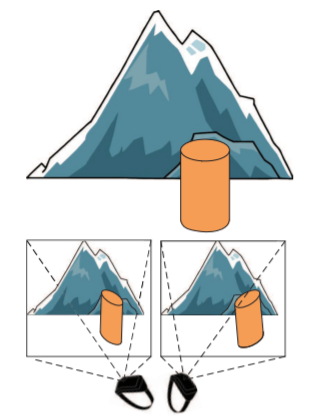

The dynamics of the viewport pose, i.e., the location and orientation of users’ points of view, are central to virtual reality (VR) experiences, which calls for the development of VR viewport pose models. In this paper, informed by our experimental measurements of viewport trajectories in three VR games and across three different types of VR interfaces, we first develop a statistical model of viewport poses in VR environments. Based on this model, we examine the correlations between pixels in VR frames that correspond to different viewport poses, and obtain an analytical expression for the visibility similarity (ViS) of the pixels across different VR frames. We then propose ALG-ViS, a lightweight ViS-based algorithm that adaptively splits VR frames into background and foreground and reuses the background across frames. Our implementation of ALG-ViS in two Oculus Quest 2 rendering systems shows that it runs in real time, supports the full VR frame rate, and outperforms baselines on measures of frame quality and bandwidth consumption.
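To make the background-reuse idea concrete, the following is a minimal Python sketch and not the authors' ALG-ViS implementation. It assumes the frame has already been split into a foreground layer and a background layer, and it replaces the paper's ViS-based adaptive decision with a fixed, hypothetical distance threshold (`max_reuse_distance`). The class `BackgroundReuser`, the `render_background` callback, and the flat pixel lists are all illustrative names and simplifications that do not come from the paper.

```python
import math
from typing import Callable, List, Optional, Sequence, Tuple

Pose = Tuple[float, float, float]  # viewport location only; orientation is omitted in this sketch


class BackgroundReuser:
    """Caches a rendered background and reuses it while the viewport stays nearby."""

    def __init__(self, render_background: Callable[[Pose], List[int]],
                 max_reuse_distance: float) -> None:
        # render_background stands in for an expensive renderer (hypothetical).
        self.render_background = render_background
        # Fixed threshold; an assumption replacing the ViS-based adaptive rule.
        self.max_reuse_distance = max_reuse_distance
        self.cached_pose: Optional[Pose] = None
        self.cached_background: Optional[List[int]] = None
        self.background_renders = 0

    def frame(self, pose: Pose, foreground: Sequence[Optional[int]]) -> List[int]:
        """Compose one frame; None in `foreground` means the background shows through."""
        stale = (
            self.cached_pose is None
            or math.dist(pose, self.cached_pose) > self.max_reuse_distance
        )
        if stale:
            # The viewport moved too far (or nothing is cached): re-render the background.
            self.cached_background = self.render_background(pose)
            self.cached_pose = pose
            self.background_renders += 1
        # Overlay the freshly rendered foreground on the (possibly reused) background.
        return [f if f is not None else b
                for f, b in zip(foreground, self.cached_background)]
```

In this sketch the foreground is supplied fresh for every frame, while the background is re-rendered only when the viewport has moved farther than the threshold from the pose at which the cached background was produced. A real system would also account for orientation and would choose the foreground/background split adaptively, as the abstract describes.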