Perception-Driven Hybrid Foveated Depth of Field Rendering for Head-Mounted Displays

PubDate: November 2021

Teams: Technical University of Denmark

Writers: Jingyu Liu; Claire Mantel; Søren Forchhammer

PDF: Perception-Driven Hybrid Foveated Depth of Field Rendering for Head-Mounted Displays

Abstract

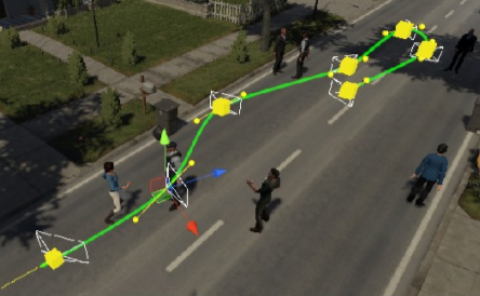

In this paper, we present a novel perception-driven hybrid rendering method that exploits the limitations of the human visual system (HVS). The features our model accounts for are: foveation from the visual acuity eccentricity (VAE), depth of field (DOF) from vergence and accommodation, and longitudinal chromatic aberration (LCA) from color vision. To allocate the computational workload efficiently, we first apply a gaze-contingent geometry simplification. We then convert the coordinates from screen space to polar space with a scaling strategy coherent with VAE. On top of that, we apply stochastic sampling based on DOF. Finally, we post-process the bokeh for DOF, which at the same time achieves LCA and anti-aliasing. A virtual reality (VR) experiment on 6 Unity scenes with an HTC VIVE Pro Eye head-mounted display (HMD) yields frame rates ranging from 25.2 to 48.7 fps. Objective evaluation with FovVideoVDP, a perception-based visible-difference metric, suggests that the proposed method gives satisfactory just-objectionable-difference (JOD) scores, ranging from 7.61 to 8.69 across the 6 scenes (on a 10-unit scale). Our method achieves better performance than existing methods while attaining the same or a better quality score.
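To make the screen-space to polar-space step more concrete, here is a minimal Python sketch of one possible mapping. The abstract does not specify the scaling function, so the logarithmic radial scaling below is an assumption borrowed from common log-polar foveated rendering practice, not the authors' formula. The function names and the parameters `max_radius`, `buf_w` and `buf_h` are hypothetical and chosen only for illustration.

```python
import math

def screen_to_log_polar(x, y, gaze_x, gaze_y, max_radius, buf_w, buf_h):
    """Map a screen pixel to (u, v) in a reduced polar buffer centred on the gaze.

    Assumption: radial scaling is log(1 + r), so pixels near the gaze point
    occupy more buffer columns than pixels in the periphery.
    """
    dx, dy = x - gaze_x, y - gaze_y
    r = math.hypot(dx, dy)                       # distance from gaze, in pixels
    u = math.log1p(r) / math.log1p(max_radius) * (buf_w - 1)
    theta = math.atan2(dy, dx) % (2.0 * math.pi) # angle in [0, 2*pi)
    v = theta / (2.0 * math.pi) * buf_h
    return u, v

def log_polar_to_screen(u, v, gaze_x, gaze_y, max_radius, buf_w, buf_h):
    """Inverse mapping, used when resampling the polar buffer to the screen."""
    r = math.expm1(u / (buf_w - 1) * math.log1p(max_radius))
    theta = v / buf_h * 2.0 * math.pi
    return gaze_x + r * math.cos(theta), gaze_y + r * math.sin(theta)

# Hypothetical setup: gaze at the centre of a 1920x1080 view, 512x512 polar buffer.
params = dict(gaze_x=960.0, gaze_y=540.0, max_radius=1500.0, buf_w=512, buf_h=512)
u, v = screen_to_log_polar(1200.0, 700.0, **params)
x_back, y_back = log_polar_to_screen(u, v, **params)
```

Under this assumed scaling, a 10-pixel step next to the gaze point spans far more buffer columns than the same step 1000 pixels away, which is the qualitative behaviour a VAE-coherent strategy needs: shading effort falls off with eccentricity.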