Benchmarking Monocular Depth Estimation Models for VR Content Creation from a User Perspective

PubDate: December 2021

Teams: University of Otago

Writers: Anthony Dickson; Alistair Knott; Stefanie Zollmann

PDF: Benchmarking Monocular Depth Estimation Models for VR Content Creation from a User Perspective

Abstract

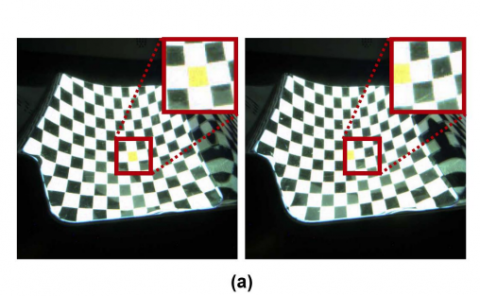

Exploring monocular images and videos in 3D in Virtual Reality (VR) requires reliable methods for estimating depth, because depth is not captured in the original footage. Monocular depth estimation methods address this requirement by using neural network models to predict a depth value for each pixel. However, as this is a quickly moving field and the state of the art is constantly evolving, how do we choose the best depth estimation model for 3D content creation for VR? It can be difficult to interpret the widely used benchmark metrics and how they relate to the quality of the created 3D/VR content. In this paper, we explore a user-centred approach to evaluating depth estimation models with regard to 3D content creation. We evaluate these models based on the content an end-user might see that was created with them, rather than only their immediate output (depth maps), which does not directly contribute to users’ perceptual experience. In particular, we investigate the relationship between commonly used depth estimation metrics, image similarity metrics applied to synthesised novel viewpoints, and user perception of the quality and similarity of these novel viewpoints. Our results suggest that the standard depth estimation metrics are indeed good indicators of 3D quality, and that they correspond well with human judgements and image similarity metrics on novel viewpoints synthesised from a range of sources of depth data. We also show that users rate a state-of-the-art depth estimation model as almost visually indistinguishable from the outputs derived from ground truth sensor data.
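The abstract does not say which "commonly used depth estimation metrics" were evaluated. As an illustration only, the sketch below computes three metrics that are widely reported in the monocular depth literature: absolute relative error, root mean squared error, and threshold accuracy (the fraction of pixels whose ratio to ground truth is below 1.25). The function name `depth_metrics`, its parameters, and the choice of metrics are assumptions made for this example, not details taken from the paper.

```python
import math


def depth_metrics(pred, gt, threshold=1.25):
    """Illustrative depth metrics over flat sequences of per-pixel depths.

    Hypothetical helper, not from the paper. Pixels with non-positive
    ground truth or prediction are treated as invalid and skipped.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if p > 0 and g > 0]
    if not pairs:
        raise ValueError("no valid pixels to evaluate")
    n = len(pairs)

    # Absolute relative error: mean of |pred - gt| / gt.
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n

    # Root mean squared error, in the same units as the depth values.
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)

    # Threshold accuracy: fraction of pixels with max(pred/gt, gt/pred) < threshold.
    delta = sum(1 for p, g in pairs if max(p / g, g / p) < threshold) / n

    return {"abs_rel": abs_rel, "rmse": rmse, "delta": delta}
```

Lower `abs_rel` and `rmse` and higher `delta` indicate a depth map closer to the ground truth. The abstract's question is whether scores of this kind also track what users perceive in the synthesised novel viewpoints.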