Talking With Hands 16.2M: A Large-Scale Dataset of Synchronized Body-Finger Motion and Audio for Conversational Motion Analysis and Synthesis

Teams: Facebook

Writers: Gilwoo Lee, Zhiwei Deng, Shugao Ma, Takaaki Shiratori, Siddhartha S. Srinivasa, Yaser Sheikh

Publication date: October 29, 2019

Abstract

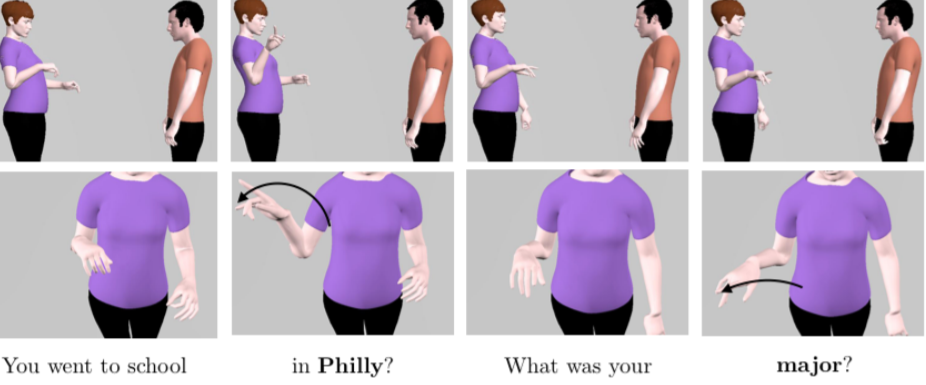

We present a 16.2 million frame (50 hour) multimodal dataset of two-person face-to-face spontaneous conversations. Our dataset features synchronized body and finger motion as well as audio data. To the best of our knowledge, it represents the largest motion capture and audio dataset of natural conversations to date. The statistical analysis verifies strong intraperson and interperson covariance of arm, hand, and speech features, potentially enabling new directions on data-driven social behavior analysis, prediction, and synthesis. As an illustration, we propose a novel real-time finger motion synthesis method: a temporal neural network innovatively trained with an inverse kinematics (IK) loss, which adds skeletal structural information to the generative model. Our qualitative user study shows that the finger motion generated by our method is perceived as natural and conversation enhancing, while the quantitative ablation study demonstrates the effectiveness of IK loss.