Synthetic-to-Real Domain Adaptation Joint Spatial Feature Transform for Stereo Matching

PubDate: November 2021

Teams: Northwestern Polytechnical University; Content Production Center of Virtual Reality

Writers: Xing Li; Yangyu Fan; Zhibo Rao; Guoyun Lv; Shiya Liu

PDF: Synthetic-to-Real Domain Adaptation Joint Spatial Feature Transform for Stereo Matching

Abstract

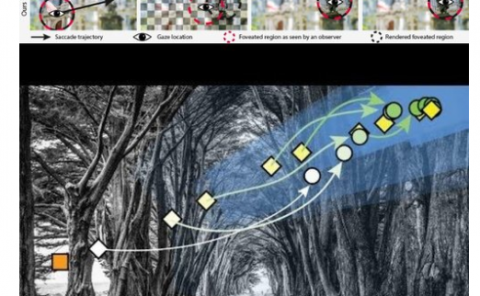

Most state-of-the-art deep learning-based stereo matching methods depend heavily on large-scale datasets. In practice, however, it is infeasible to collect enough real-world samples with dense and clean ground-truth disparity maps. Although the emergence of synthetic datasets has alleviated the demand for extensive real data, a domain shift exists between synthetic and real data. To tackle this problem, we propose an independently trained synthetic-to-real domain adaptation (SDA) network that maps synthetic images into the real domain. Specifically, our approach translates the style of the data from the synthetic domain to the real domain while preserving its content and spatial information. First, edge cues are leveraged to guide the domain adaptation so that spatial consistency is preserved between the input and the generated image. Second, we incorporate a spatial feature transform (SFT) layer to effectively fuse features from the edge map and the source image. Extensive experiments demonstrate that: 1) when trained only on synthetic data and evaluated on real data, our model clearly outperforms many state-of-the-art domain adaptation methods; and 2) our translated synthetic datasets (TSD) help improve the generalization capability of any stereo matching CNN. Code and data will be available at https://github.com/Archaic-Atom/SDA_network.
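The abstract names two mechanisms, edge guidance and an SFT layer, without specifying the edge detector or the layers that predict the SFT parameters. The plain-Python sketch below is only an illustration under stated assumptions: it uses a Sobel gradient magnitude as the edge map, and it replaces the learned condition network with a hypothetical per-pixel affine mapping (`w_gamma`, `b_gamma`, `w_beta`, `b_beta`) so that the SFT modulation `out = gamma * F + beta` is visible. The helper `edge_consistency` is likewise a hypothetical L1 penalty showing how edge maps could keep a generated image spatially aligned with its input; it is not the authors' loss.

```python
from typing import List

Grid = List[List[float]]


def sobel_edges(img: Grid) -> Grid:
    """Edge map as Sobel gradient magnitude (assumed detector); border pixels stay 0."""
    h, w = len(img), len(img[0])
    kx = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    ky = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0.0
            for dy in range(3):
                for dx in range(3):
                    p = img[y + dy - 1][x + dx - 1]
                    gx += kx[dy][dx] * p
                    gy += ky[dy][dx] * p
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out


def sft(feat: Grid, cond: Grid, w_gamma: float, b_gamma: float,
        w_beta: float, b_beta: float) -> Grid:
    """Spatial feature transform: out = gamma * feat + beta, element-wise.

    gamma and beta are per-pixel maps computed from the condition (here the
    edge map). A real SFT layer predicts them with learned convolutions; the
    affine mapping below is a hypothetical stand-in for illustration only.
    """
    return [
        [(w_gamma * c + b_gamma) * f + (w_beta * c + b_beta)
         for f, c in zip(frow, crow)]
        for frow, crow in zip(feat, cond)
    ]


def edge_consistency(src: Grid, gen: Grid) -> float:
    """Hypothetical mean absolute difference between the two images' edge maps."""
    es, eg = sobel_edges(src), sobel_edges(gen)
    n = len(src) * len(src[0])
    return sum(abs(a - b) for rs, rg in zip(es, eg) for a, b in zip(rs, rg)) / n


if __name__ == "__main__":
    # A 4x4 image with a vertical step edge between columns 1 and 2.
    img = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
    edges = sobel_edges(img)
    feat = [[1.0] * 4 for _ in range(4)]
    out = sft(feat, edges, w_gamma=0.25, b_gamma=1.0, w_beta=0.0, b_beta=0.5)
    print(edges[1])  # [0.0, 4.0, 4.0, 0.0]
    print(out[1])    # [1.5, 2.5, 2.5, 1.5]
```

In this toy setting the features are amplified only where the edge map responds, which is the intuition behind conditioning the translation on edges: structure-bearing locations are modulated differently from flat regions, so spatial layout can be carried through the style transfer.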