Eliciting Multimodal Gesture+Speech Interactions in a Multi-Object Augmented Reality Environment

PubDate: Jul 2022

Affiliation: Colorado State University

Authors: Xiaoyan Zhou, Adam S. Williams, Francisco R. Ortega


Abstract

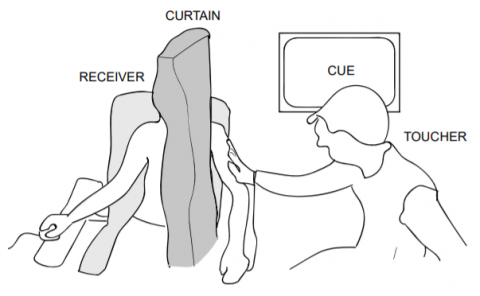

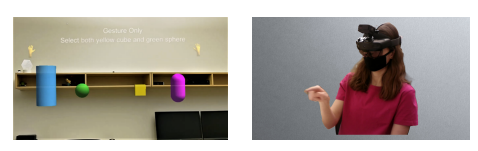

As augmented reality technology and hardware become more mature and affordable, researchers have been exploring more intuitive and discoverable interaction techniques for immersive environments. In this paper, we investigate multimodal gesture and speech interaction for 3D object manipulation in a multi-object virtual environment. To identify user-defined gestures, we conducted an elicitation study with 24 participants and 22 referents using an augmented reality headset. The study yielded 528 proposals, and binning and ranking all gesture proposals produced a winning set of 25 gestures. We found that, for the same task, participants preferred the same gesture for both one-object and two-object manipulation, although they used both hands in the two-object scenario. We present the gesture and speech results and discuss how they differ from similar studies conducted in a single-object virtual environment. The study also explored the association between speech expressions and gesture strokes during object manipulation, which could improve recognizer efficiency in augmented reality headsets.
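The abstract does not specify how proposals were binned and ranked. The sketch below shows one common approach. It assumes that each participant's proposal for a referent has already been assigned a bin label and that agreement is measured with the agreement rate (AR) of Vatavu and Wobbrock (2015). That metric is widely used in elicitation studies, but the abstract does not confirm that this paper uses it. With one proposal per participant per referent, 24 participants × 22 referents gives the 528 proposals reported. The function names and bin labels are hypothetical.

```python
from collections import Counter


def agreement_rate(proposals):
    """Agreement rate (Vatavu & Wobbrock, 2015) for a single referent.

    `proposals` holds one bin label per participant (hypothetical labels).
    AR = sum over bins of |P_i| * (|P_i| - 1) / (|P| * (|P| - 1)),
    i.e. the fraction of participant pairs whose proposals fall in the same bin.
    """
    n = len(proposals)
    if n < 2:
        raise ValueError("agreement rate needs at least two proposals")
    counts = Counter(proposals)
    return sum(c * (c - 1) for c in counts.values()) / (n * (n - 1))


def winning_gestures(proposals_by_referent):
    """Pick the most frequent bin for each referent (ties go to the bin seen first)."""
    return {
        referent: Counter(props).most_common(1)[0][0]
        for referent, props in proposals_by_referent.items()
    }


# Hypothetical data: four participants propose gestures for a "move" referent.
example = {"move": ["pinch", "pinch", "grab", "pinch"]}
print(agreement_rate(example["move"]))  # → 0.5
print(winning_gestures(example))  # → {'move': 'pinch'}
```

Counting proposals by frequency keeps the ranking transparent. AR complements that count by showing how strongly participants converged on each referent's winner.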