Virtual Keyboards With Real-Time and Robust Deep Learning-Based Gesture Recognition

PubDate: April 2022

Teams: Seoul National University;University of Washington;Sun Moon University

Writers: Tae-Ho Lee; Sunwoong Kim; Taehyun Kim; Jin-Sung Kim; Hyuk-Jae Lee

Abstract

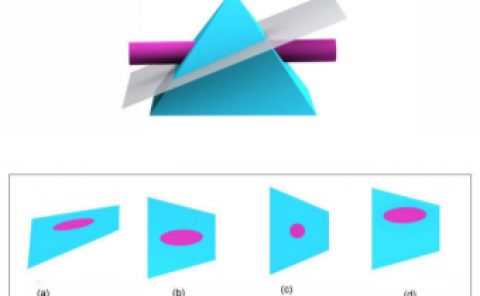

In head-mounted display devices for augmented reality and virtual reality, external signals are often entered using a virtual keyboard (VKB). Among the various user interfaces for VKBs, hand gestures are widely used because they are fast and intuitive. This work proposes a gesture-recognition (GR)-based VKB algorithm that is accurate in any environment and operates in real time. Specifically, the proposed ambidextrous VKB layouts reduce the total finger travel distance compared with one-hand VKB layouts. Additionally, a fast typing action is proposed that exploits the case in which the previous and current keys are adjacent. To be robust in any environment, the proposed VKB algorithm uses a deep learning (DL)-based GR method. To train the DL networks, seven classes are defined, and an automated dataset generation method is proposed to reduce the time and effort required. The proposed one-hand VKB layout with the fast typing action achieves a typing speed 1.5× faster than the popular ABC keyboard layout. Furthermore, the proposed ambidextrous VKB layout brings an additional 52% improvement over the proposed one-hand VKB layout. The proposed DL-based GR method, implemented on the well-known YOLOv3 object detection framework, achieves a mean average precision of 95% on images that include background colors similar to skin color. The proposed DL-based GR method for one-hand and ambidextrous VKBs runs at around 41 frames per second on a software platform, which allows real-time processing.
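The abstract evaluates layouts by total finger travel distance and uses key adjacency for the fast typing action. The sketch below illustrates how those two quantities could be computed on a simple grid. It is not the authors' implementation: the six-column alphabetical grid, the Euclidean distance metric, the 8-neighbor definition of adjacency, and the names `make_grid_layout`, `travel_distance`, and `is_adjacent` are all assumptions made for illustration.

```python
from math import hypot


def make_grid_layout(keys, columns):
    """Place keys row by row on a grid; returns key -> (row, col).

    Hypothetical layout helper, not the layout proposed in the paper.
    """
    return {key: divmod(index, columns) for index, key in enumerate(keys)}


def travel_distance(text, layout):
    """Sum of Euclidean distances between consecutive keys of `text`.

    Characters that are not on the layout are skipped.
    """
    positions = [layout[ch] for ch in text if ch in layout]
    return sum(
        hypot(r2 - r1, c2 - c1)
        for (r1, c1), (r2, c2) in zip(positions, positions[1:])
    )


def is_adjacent(prev_key, cur_key, layout):
    """True if the two keys are distinct neighbors on the grid.

    Assumes 8-neighbor adjacency (horizontal, vertical, or diagonal).
    """
    (r1, c1), (r2, c2) = layout[prev_key], layout[cur_key]
    return max(abs(r1 - r2), abs(c1 - c2)) == 1


# Assumed alphabetical grid with six columns, for illustration only.
ABC_LAYOUT = make_grid_layout("abcdefghijklmnopqrstuvwxyz", columns=6)

if __name__ == "__main__":
    word = "hello"
    print(f"travel distance for {word!r}: {travel_distance(word, ABC_LAYOUT):.2f}")
    print("'a' and 'h' adjacent:", is_adjacent("a", "h", ABC_LAYOUT))
```

Comparing `travel_distance` over the same text for two candidate layouts gives a rough proxy for which one requires less finger movement. A real evaluation would also need measured key sizes and, for ambidextrous layouts, a separate position for each hand.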