SFNet: Clothed Human 3D Reconstruction via Single Side-to-Front View RGB-D Image

PubDate: Aug 2022

Teams: Northwestern Polytechnical University; Shadow Creator Inc; Content Production Center of Virtual Reality

Writers: Xing Li; Yangyu Fan; Di Xu; Wenqing He; Guoyun Lv; Shiya Liu

PDF: SFNet: Clothed Human 3D Reconstruction via Single Side-to-Front View RGB-D Image

Abstract

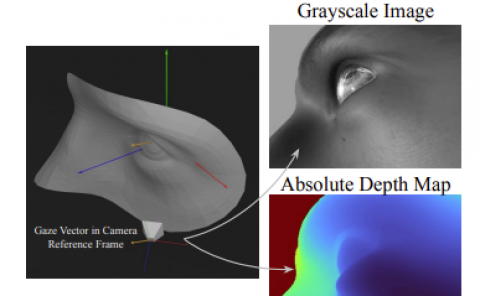

Front-view human information is critical for reconstructing a detailed 3D human body from a single RGB/RGB-D image. In practice, however, a front-view portrait is not always available. In this work, we therefore propose a bidirectional network (SFNet) with two branches: one transforms a side-view RGB image to the front view, and the other transforms a side-view depth image to the front view. Because normal maps typically encode more 3D surface detail than depth maps, we use an adversarial learning framework conditioned on normal maps to improve front-view depth prediction. Our method is end-to-end trainable and yields high-fidelity front-view RGB-D estimation and 3D reconstruction.
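To illustrate why a normal map exposes surface detail that is easy to miss in raw depth values, the following minimal sketch derives per-pixel normals from a depth map with finite differences. It is an illustration only, not the authors' pipeline: the abstract does not say how the normal maps are obtained, and the function name `depth_to_normals`, the orthographic camera model, and the toy depth maps are all assumptions made for this example.

```python
import numpy as np


def depth_to_normals(depth: np.ndarray) -> np.ndarray:
    """Estimate unit surface normals from an (H, W) depth map.

    Assumption: orthographic projection with unit pixel spacing, so the
    surface is z = depth[y, x] and an (unnormalized) normal is
    (-dz/dx, -dz/dy, 1). This is a hypothetical helper, not SFNet code.
    """
    # np.gradient returns derivatives along axis 0 (rows, y) then axis 1 (cols, x).
    dz_dy, dz_dx = np.gradient(depth.astype(np.float64))
    normals = np.stack([-dz_dx, -dz_dy, np.ones_like(dz_dx)], axis=-1)
    # Normalize each pixel's vector to unit length.
    normals /= np.linalg.norm(normals, axis=-1, keepdims=True)
    return normals


# Toy inputs: a fronto-parallel plane and a plane tilted along the x axis.
rows, cols = np.mgrid[0:4, 0:5]
flat = np.full((4, 5), 2.0)
tilted = 2.0 + 0.5 * cols

flat_normals = depth_to_normals(flat)
tilted_normals = depth_to_normals(tilted)

print(flat_normals[0, 0])
print(tilted_normals[0, 0])
```

The two planes have depth values of similar magnitude, yet their normals differ clearly in direction. This sensitivity to local slope is one plausible reason a discriminator conditioned on normal maps could provide a stronger signal for fine surface detail than one that sees depth alone.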