Multi-Camera Lighting Estimation for Photorealistic Front-Facing Mobile Augmented Reality

PubDate: Jan 2023

Teams: Worcester Polytechnic Institute; Google

Writers: Yiqin Zhao, Sean Fanello, Tian Guo


Abstract

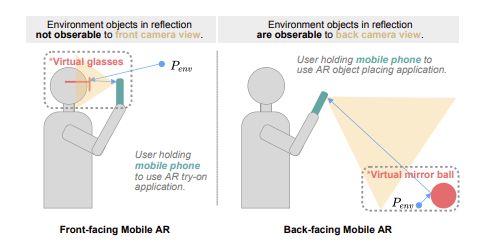

Lighting understanding plays an important role in virtual object composition, including in mobile augmented reality (AR) applications. Prior work often targets recovering lighting from the physical environment to support photorealistic AR rendering. Because the common workflow uses a back-facing camera to capture the physical world onto which virtual objects are overlaid, we refer to this usage pattern as back-facing AR. However, existing methods often fall short in supporting emerging front-facing mobile AR applications, e.g., virtual try-on, where a user leverages a front-facing camera to explore the effect of various products (e.g., glasses or hats) of different styles. This lack of support can be attributed to the unique challenges of obtaining 360