Few-shot 3D Shape Generation

PubDate: May 2023

Teams: Tsinghua University; University of Science and Technology Beijing

Writers: Jingyuan Zhu, Huimin Ma, Jiansheng Chen, Jian Yuan

PDF: Few-shot 3D Shape Generation

Abstract

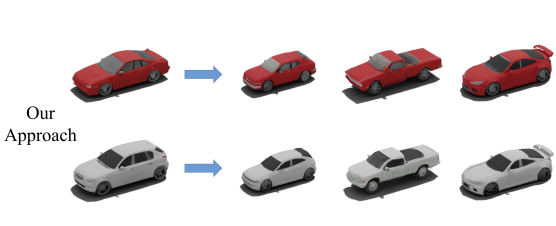

Realistic and diverse 3D shape generation is useful for a wide variety of applications such as virtual reality, gaming, and animation. Modern generative models, such as GANs and diffusion models, learn from large-scale datasets and generate new samples that follow similar data distributions. However, when training data is limited, deep generative networks overfit and tend to replicate the training samples. Prior work on few-shot generation focuses on images, producing high-quality and diverse results from a few target images. Abundant 3D shape data is typically hard to obtain as well. In this work, we make the first attempt at few-shot 3D shape generation by adapting generative models pre-trained on large source domains to target domains using limited data. To relieve overfitting and preserve diversity, we propose to maintain the probability distributions of the pairwise relative distances between adapted samples at the feature level and the shape level during domain adaptation. Our approach needs only the silhouettes of the few-shot target samples as training data to learn target geometry distributions and generate shapes with diverse topology and textures. Moreover, we introduce several metrics to evaluate the quality and diversity of few-shot 3D shape generation. The effectiveness of our approach is demonstrated qualitatively and quantitatively under a series of few-shot 3D shape adaptation setups.
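The abstract does not give the exact form of the loss that keeps pairwise relative distances intact, so the following is only a minimal sketch of one plausible feature-level reading, not the authors' implementation. It assumes cosine similarity as the distance measure, a softmax to turn each sample's similarities into a probability distribution, and a KL divergence between the adapted and source distributions. All function names, and the direction of the KL term, are assumptions made for illustration.

```python
import math

def cosine_similarity(u, v):
    # Cosine similarity between two feature vectors (assumed distance measure).
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)

def softmax(values):
    # Numerically stable softmax over a list of floats.
    m = max(values)
    exps = [math.exp(x - m) for x in values]
    total = sum(exps)
    return [e / total for e in exps]

def relative_distance_distributions(features):
    # For each sample i, a distribution over its similarities to all other samples.
    n = len(features)
    return [
        softmax([cosine_similarity(features[i], features[j])
                 for j in range(n) if j != i])
        for i in range(n)
    ]

def kl_divergence(p, q, eps=1e-12):
    # KL(p || q) for two discrete distributions of equal length.
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def pairwise_distance_loss(source_feats, adapted_feats):
    # Hypothetical loss: mean KL between adapted and source distributions.
    # Both feature lists are assumed to come from the same batch of latent codes,
    # passed through the source model and the adapted model respectively.
    src = relative_distance_distributions(source_feats)
    ada = relative_distance_distributions(adapted_feats)
    return sum(kl_divergence(a, s) for a, s in zip(ada, src)) / len(src)

# Toy usage: features for three samples generated from the same latent codes.
source = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
collapsed = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]  # adapted model replicating one sample
loss_same = pairwise_distance_loss(source, source)
loss_collapsed = pairwise_distance_loss(source, collapsed)
```

In this toy setup the loss is zero when the adapted features keep the source model's relative structure and positive when the adapted samples collapse onto a single point, which is the overfitting behaviour such a regularizer is meant to penalize. A shape-level counterpart could apply the same computation to representations derived from rendered silhouettes, though that too is an assumption here.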