Exploring Human Response Times to Combinations of Audio, Haptic, and Visual Stimuli from a Mobile Device

PubDate: May 2023

Teams: Stanford University; Cornell University

Writers: Kyle T. Yoshida, Joel X. Kiernan, Allison M. Okamura, Cara M. Nunez

Abstract

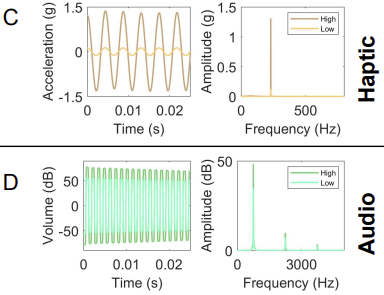

Auditory, haptic, and visual stimuli provide alerts, notifications, and information for a wide variety of applications ranging from virtual reality to wearable and hand-held devices. Response times to these stimuli have been used to assess motor control and to design human-computer interaction systems. In this study, we investigate human response times to 26 combinations of auditory, haptic, and visual stimuli at three levels (high, low, and off). We developed an iOS app that presents these stimuli at random intervals and records response times on an iPhone 11. We conducted a user study with 20 participants and found that response time decreased with more types and higher levels of stimuli. The low visual condition had the slowest mean response time (mean ± standard deviation, 528 ± 105 ms), and the condition with high levels of audio, haptic, and visual stimuli had the fastest mean response time (320 ± 43 ms). This work quantifies response times to multi-modal stimuli, identifies interactions between different stimuli types and levels, and introduces an app-based method that can be widely distributed to measure response time. Understanding preferences and response times for stimuli can provide insight into designing devices for human-machine interaction.
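
The figure of 26 combinations is consistent with three stimulus types at three levels each, minus the single case in which all three are off: 3^3 - 1 = 26. The Python sketch below enumerates the conditions under that assumption. The names `MODALITIES`, `LEVELS`, and `stimulus_conditions` are illustrative and are not taken from the study's iOS app.

```python
from itertools import product

# Assumed labels for the three stimulus types and three levels in the abstract.
MODALITIES = ("audio", "haptic", "visual")
LEVELS = ("off", "low", "high")


def stimulus_conditions():
    """Return every level assignment except the one with all stimuli off."""
    conditions = []
    for levels in product(LEVELS, repeat=len(MODALITIES)):
        # Skip the all-off case: it presents nothing to respond to.
        if all(level == "off" for level in levels):
            continue
        conditions.append(dict(zip(MODALITIES, levels)))
    return conditions


conditions = stimulus_conditions()
print(len(conditions))  # 3**3 - 1 = 26
```

Under this enumeration, the slowest condition reported above corresponds to `{"audio": "off", "haptic": "off", "visual": "low"}` and the fastest to all three stimuli set to `"high"`.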