Motion Matching for Character Animation and Virtual Reality Avatars in Unity

PubDate: Oct 2023

Teams: Jose Luis Ponton

Writers: Jose Luis Ponton


Abstract

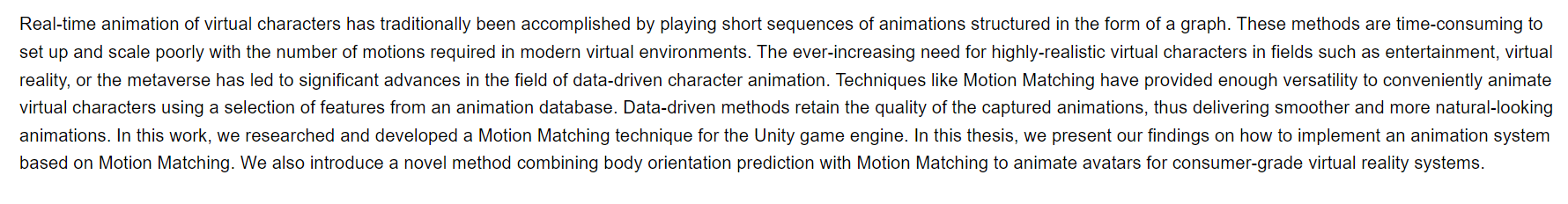

Real-time animation of virtual characters has traditionally been accomplished by playing short animation clips organized in a graph. These methods are time-consuming to set up and scale poorly as the number of motions required by modern virtual environments grows. The ever-increasing need for highly realistic virtual characters in fields such as entertainment, virtual reality, and the metaverse has driven significant advances in data-driven character animation. Techniques such as Motion Matching are versatile enough to animate virtual characters conveniently by searching an animation database for the frame whose selected features best match the character's current state and desired motion. Because data-driven methods reuse the captured animations directly, they retain their quality and deliver smoother, more natural-looking motion. In this thesis, we research and develop a Motion Matching technique for the Unity game engine and present our findings on how to implement an animation system based on it. We also introduce a novel method that combines body orientation prediction with Motion Matching to animate avatars on consumer-grade virtual reality systems.
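The core of Motion Matching is a search over the feature vectors of every frame in the animation database. The following is a minimal brute-force sketch of that search, not the thesis's Unity implementation. It assumes that each frame is already described by a flat list of numbers, for example future trajectory positions and directions, foot positions, and velocities. Each feature dimension is normalized by its standard deviation so that no single feature dominates, and a per-dimension weight is applied. The names `feature_stats` and `best_match` and the weighting scheme are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def feature_stats(database: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Return the per-dimension mean and standard deviation of the database features."""
    n = len(database)
    dims = len(database[0])
    means = [sum(row[d] for row in database) / n for d in range(dims)]
    stds = []
    for d in range(dims):
        var = sum((row[d] - means[d]) ** 2 for row in database) / n
        # Constant dimensions get a std of 1.0 to avoid division by zero.
        stds.append(math.sqrt(var) if var > 0 else 1.0)
    return means, stds


def best_match(
    query: Sequence[float],
    database: Sequence[Sequence[float]],
    weights: Sequence[float],
    stds: Sequence[float],
) -> int:
    """Return the index of the database frame with the lowest weighted, normalized squared distance.

    Normalizing both vectors as (x - mean) / std gives a difference of (q - x) / std,
    so the mean cancels and only the standard deviation is needed here.
    """
    best_index, best_cost = -1, math.inf
    for i, row in enumerate(database):
        cost = 0.0
        for d, (q, x) in enumerate(zip(query, row)):
            diff = (q - x) / stds[d]
            cost += weights[d] * diff * diff
            if cost >= best_cost:
                break  # Early out: this frame can no longer beat the best one.
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index


# Usage: three frames with 2D features; the query is closest to the last frame.
db = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]
_, stds = feature_stats(db)
print(best_match([1.9, 2.1], db, [1.0, 1.0], stds))
```

A linear scan costs O(N·D) per query for N frames and D feature dimensions. This is simple, but production systems usually add an acceleration structure and search only every few frames, blending to the new frame when it differs from the one currently playing.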