Challenges and Design Considerations for Multimodal Asynchronous Collaboration in VR

PubDate: November 2019

Teams: University of British Columbia, Adobe Research

Writers: Kevin Chow; Caitlin Coyiuto; Cuong Nguyen; Dongwook Yoon

Abstract

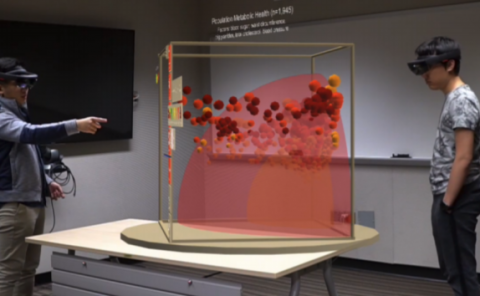

Studies on collaborative virtual environments (CVEs) have suggested that capturing and later replaying multimodal interactions (e.g., speech, body language, and scene manipulations), which we refer to as multimodal recordings, is an effective medium for time-distributed collaborators to discuss and review 3D content in an immersive, expressive, and asynchronous way. However, gaps remain in empirical knowledge of how this multimodal asynchronous VR collaboration (MAVRC) context impacts social behaviors in mediated communication, workspace awareness in cooperative work, and user requirements for authoring and consuming multimedia recordings. This study aims to address these gaps by conceptualizing MAVRC as a type of computer-supported cooperative work (CSCW) and by identifying the challenges and design considerations of MAVRC systems. To this end, we conducted an exploratory need-finding study in which participants (N = 15) used an experimental MAVRC system to complete a representative spatial task in an asynchronous collaborative setting, involving both consumption and production of multimodal recordings. Qualitative analysis of interview and observation data from the study revealed unique, core design challenges of MAVRC in: (1) coordinating proxemic behaviors between asynchronous collaborators, (2) providing traceability and change awareness across different versions of 3D scenes, (3) accommodating viewpoint control to maintain workspace awareness, and (4) supporting navigation and editing of multimodal recordings. We discuss design implications, ideate on potential design solutions, and conclude with a set of design recommendations for MAVRC systems.