Gesture2Text: A Generalizable Decoder for Word-Gesture Keyboards in XR Through Trajectory Coarse Discretization and Pre-training

PubDate: Otc 2024

Teams:Meta,University of Bristol

Writers:Junxiao Shen, Khadija Khaldi, Enmin Zhou, Hemant Bhaskar Surale, Amy Karlson

Abstract

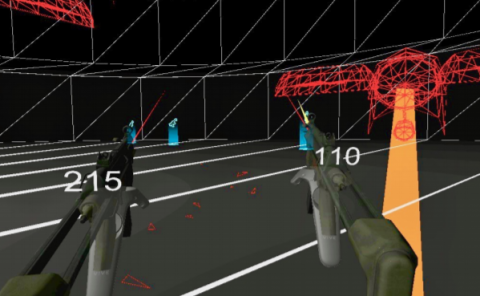

Text entry with word-gesture keyboards (WGK) is emerging as a popular method and becoming a key interaction for Extended Reality (XR). However, the diversity of interaction modes, keyboard sizes, and visual feedback in these environments introduces divergent word-gesture trajectory data patterns, thus leading to complexity in decoding trajectories into text. Template-matching decoding methods, such as SHARK

2, are commonly used for these WGK systems because they are easy to implement and configure. However, these methods are susceptible to decoding inaccuracies for noisy trajectories. While conventional neural-network-based decoders (neural decoders) trained on word-gesture trajectory data have been proposed to improve accuracy, they have their own limitations: they require extensive data for training and deep-learning expertise for implementation. To address these challenges, we propose a novel solution that combines ease of implementation with high decoding accuracy: a generalizable neural decoder enabled by pre-training on large-scale coarsely discretized word-gesture trajectories. This approach produces a ready-to-use WGK decoder that is generalizable across mid-air and on-surface WGK systems in augmented reality (AR) and virtual reality (VR), which is evident by a robust average Top-4 accuracy of 90.4% on four diverse datasets. It significantly outperforms SHARK

2 with a 37.2% enhancement and surpasses the conventional neural decoder by 7.4%. Moreover, the Pre-trained Neural Decoder's size is only 4 MB after quantization, without sacrificing accuracy, and it can operate in real-time, executing in just 97 milliseconds on Quest 3.