Deep reinforcement learning-driven intelligent panoramic video bitrate adaptation

PubDate: May 2019

Teams: Sun Yat-sen University

Writers: Gongwei Xiao;Xu Chen;Muhong Wu;Zhi Zhou

PDF: Deep reinforcement learning-driven intelligent panoramic video bitrate adaptation

Abstract

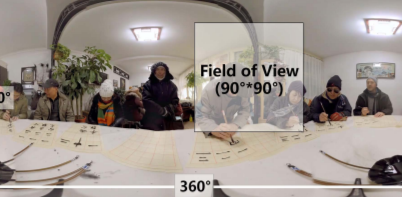

Online panoramic video has recently gained enormous popularity. Tile-based adaptive streaming is a promising way to deliver panoramic video while saving bandwidth. However, estimating the user's field of view (FoV) and delivering the optimal bitrate are challenging because user behavior is dynamic and network conditions vary over time. In this paper, we propose a novel approach to delivering panoramic video. Specifically, a long short-term memory (LSTM) model estimates the FoV for the next few seconds. Our quality adaptation policy is based on a deep reinforcement learning (DRL) agent that adapts its bitrate selection policy to different environments. We have implemented a prototype of this system. After convergence, it outperforms existing panoramic video streaming frameworks by 12% in quality of experience (QoE) across a wide range of environment metrics.
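To make the tile-based idea concrete, the following is a minimal sketch of how a predicted FoV could steer per-tile bitrate selection under a bandwidth budget. It is a simple greedy heuristic used only for illustration, not the DRL agent described in the abstract. The function name `allocate_tile_bitrates`, the per-tile viewing probabilities, the bitrate ladder, and the budget are all hypothetical values assumed for the example.

```python
from typing import List, Sequence


def allocate_tile_bitrates(
    view_prob: Sequence[float],
    ladder: Sequence[float],
    budget: float,
) -> List[float]:
    """Greedy stand-in for a tile bitrate policy (NOT the paper's DRL agent).

    view_prob: assumed per-tile probability of lying in the predicted FoV.
    ladder:    available per-tile bitrates in Mbps, sorted ascending.
    budget:    estimated bandwidth in Mbps for all tiles of one segment.
    """
    n = len(view_prob)
    level = [0] * n            # every tile starts at the lowest rung
    used = ladder[0] * n       # cost of the all-lowest baseline

    # Visit tiles from most to least likely to be viewed.
    order = sorted(range(n), key=lambda i: view_prob[i], reverse=True)
    for i in order:
        # Upgrade this tile rung by rung while the budget allows it.
        while level[i] + 1 < len(ladder):
            cost = ladder[level[i] + 1] - ladder[level[i]]
            if used + cost > budget:
                break
            level[i] += 1
            used += cost

    return [ladder[l] for l in level]


# Four tiles, three bitrate rungs, 10 Mbps budget (all values illustrative).
rates = allocate_tile_bitrates([0.9, 0.1, 0.6, 0.05], [1.0, 2.5, 5.0], 10.0)
print(rates)  # [5.0, 1.0, 2.5, 1.0]
```

Here the tile most likely to be viewed gets the top rung, the next most likely gets the middle rung, and the remaining tiles stay at the lowest bitrate so the whole panorama is still available if the prediction is wrong. If the budget does not even cover the lowest rung for every tile, this sketch still returns the all-lowest assignment. A learned policy such as the one the abstract describes would replace this fixed rule with decisions that adapt to the observed network and viewing behavior.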