Investigating different modalities of directional cues for multi-task visual-searching scenario in virtual reality

PubDate: November 2018

Teams: City University of Hong Kong

Writers: Taizhou Chen;Yi-Shiun Wu;Kening Zhu

Abstract

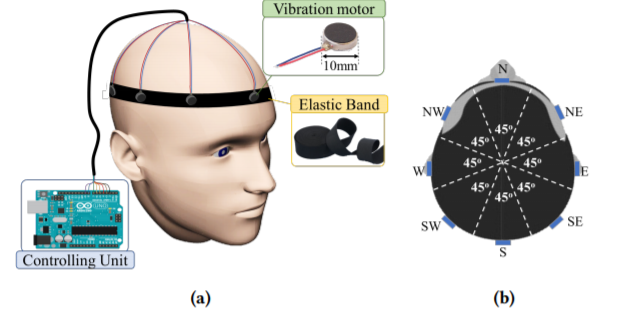

In this study, we investigated and compared the effectiveness of visual, auditory, and vibrotactile directional cues on multiple simultaneous visual-searching tasks in an immersive virtual environment. Effectiveness was determined by the task-completion time, the range of head movement, the accuracy of the identification task, and the perceived workload. Our experiment showed that the on-head vibrotactile display can effectively guide users towards virtual visual targets, without affecting their performance on the other simultaneous tasks, in the immersive VR environment. These results can be applied to numerous applications (e.g. gaming, driving, and piloting) in which there are usually multiple simultaneous tasks, and the user experience and performance could be vulnerable.