Depth discrimination between augmented reality and real-world targets for vehicle head-up displays

PubDate: September 2015

Teams: University of Nottingham, Jaguar Land Rover

Writers: Mike Long;Gary Burnett;Robert Hardy;Harriet Allen

PDF: Depth discrimination between augmented reality and real-world targets for vehicle head-up displays

Abstract

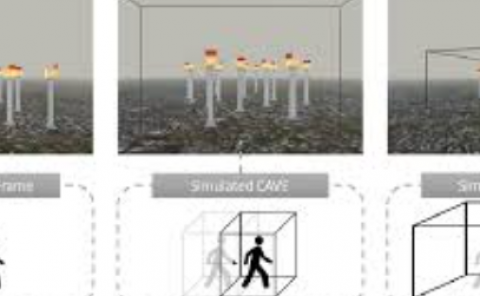

Augmented reality (AR) adds virtual graphics, sounds or data to a real-world environment. Future head-up displays (HUDs) in vehicles will enable AR images to be presented at varying depths, potentially allowing additional cues to be provided to drivers to facilitate task performance. To position such AR imagery correctly, it is necessary to know the point at which the virtual image becomes discriminable in depth from a real-world object. In a two-alternative forced-choice psychophysical depth judgment task, 40 observers judged whether an AR image (a green diamond) appeared in front of or behind a static ‘pedestrian’ target. Depth thresholds for the AR image were tested with the pedestrian target at 5 m, 10 m, 20 m and 25 m, and at six locations relative to the pedestrian. The AR image was presented at different heights in the visual field (above, middle and below the real-world target) and at different positions across the horizontal plane (left, middle and right of the real-world target). Participants were more likely to report that the AR image was in front of the target than behind it. Contrary to previous findings, no overall effects of height or horizontal position were found. Depth thresholds scaled with distance, with larger thresholds at greater distances. Findings also showed large individual differences and slow response times (above 2.5 s on average), suggesting that judging the depth of AR images is difficult. Recommendations are made regarding where a HUD image should be located in depth if a designer wishes users to reliably perceive it as being in front of, alongside or behind a real-world object.
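The abstract does not say how the depth thresholds were computed. A common approach for two-alternative forced-choice data is to fit a cumulative-Gaussian psychometric function to the proportion of "behind" responses. The minimal sketch below takes that approach and is not the authors' method. It assumes the depth offset is the AR image depth minus the target depth (positive means the AR image is farther away) and uses a 75% criterion for the threshold. The function names `fit_psychometric` and `depth_threshold` are hypothetical. The fitted mean (the point of subjective equality, PSE) captures response bias, and the spread sets the threshold.

```python
import math
from statistics import NormalDist
from typing import List, Sequence, Tuple

# ASSUMPTION: offset = AR depth minus target depth, in metres (positive = AR farther).
# ASSUMPTION: p("behind") follows a cumulative Gaussian in that offset.


def log_likelihood(dist: NormalDist, offsets: Sequence[float],
                   n_behind: Sequence[int], n_trials: Sequence[int]) -> float:
    """Binomial log-likelihood of the observed 'behind' counts under `dist`."""
    ll = 0.0
    for x, k, n in zip(offsets, n_behind, n_trials):
        # Clamp p away from 0 and 1 so the logarithms stay finite.
        p = min(max(dist.cdf(x), 1e-12), 1.0 - 1e-12)
        ll += k * math.log(p) + (n - k) * math.log(1.0 - p)
    return ll


def _grid(lo: float, hi: float, step: float) -> List[float]:
    """Evenly spaced grid from lo to hi inclusive."""
    count = int(round((hi - lo) / step)) + 1
    return [lo + i * step for i in range(count)]


def fit_psychometric(offsets: Sequence[float], n_behind: Sequence[int],
                     n_trials: Sequence[int],
                     mu_range: Tuple[float, float] = (-5.0, 5.0),
                     sigma_range: Tuple[float, float] = (0.05, 5.0),
                     step: float = 0.05) -> Tuple[float, float]:
    """Grid-search maximum-likelihood fit; returns (PSE mu, spread sigma)."""
    best_ll, best_mu, best_sigma = -math.inf, 0.0, 1.0
    for mu in _grid(*mu_range, step):
        for sigma in _grid(*sigma_range, step):
            ll = log_likelihood(NormalDist(mu, sigma), offsets, n_behind, n_trials)
            if ll > best_ll:
                best_ll, best_mu, best_sigma = ll, mu, sigma
    return best_mu, best_sigma


def depth_threshold(sigma: float, criterion: float = 0.75) -> float:
    """Offset from the PSE to the `criterion` point of the fitted function."""
    return sigma * NormalDist().inv_cdf(criterion)
```

Under this sign convention, a bias toward reporting "in front" (as the abstract describes) appears as a PSE greater than zero. The AR image would then have to be placed somewhat behind the target before "in front" and "behind" responses become equally likely. Fitting each target distance separately would let the threshold's growth with distance be compared directly.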