Alignment of the Virtual Scene to the Tracking Space of a Mixed Reality Head-Mounted Display

PubDate: Mar 2019

Teams: Johns Hopkins University

Writers: Ehsan Azimi, Long Qian, Nassir Navab, Peter Kazanzides

Abstract

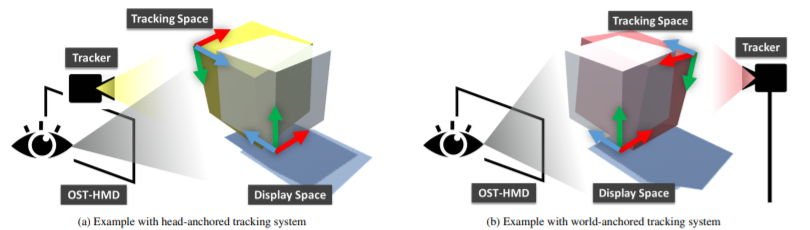

With growing global interest in optical see-through head-mounted displays (OST-HMDs) across medical, industrial and entertainment settings, many systems with different capabilities are rapidly entering the market. Despite this variety, they all require display calibration to create a proper mixed reality environment. With the aid of tracking systems, it is possible to register rendered graphics with tracked objects in the real world. We propose a calibration procedure to properly align the coordinate system of the 3D virtual scene that the user sees with that of the tracker. Our method takes a black-box approach to HMD calibration, in which the tracker's data is the input and the 3D coordinates of a virtual object in the observer's eye are the output; the objective is thus to find the 3D projection that aligns the virtual content with its real counterpart. In addition, we introduce a faster and more intuitive version of this calibration in which the user simultaneously aligns multiple points of a single virtual 3D object with its real counterpart; this reduces the number of required alignment repetitions from 20 to only 4, making the calibration task much easier for the user. In this paper, both internal (HMD camera) and external tracking systems are studied. We perform experiments with the Microsoft HoloLens, taking advantage of its self-localization and spatial mapping capabilities to eliminate the need for a line of sight from the HMD to the object or external tracker. The experimental results indicate an accuracy of up to 4 mm in average reprojection error, based on two separate evaluation methods. We further perform experiments with internal tracking on the Epson Moverio BT-300 to demonstrate that the method can provide similar results with other HMDs.
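The abstract frames calibration as a black box: tracker coordinates go in, virtual-scene coordinates come out, and a 3D projection relates the two. As a minimal sketch, assuming that projection is modeled as a general 4x4 homogeneous matrix (with its last entry fixed to 1, leaving 15 unknowns) and fitted by a direct linear transformation (DLT) style least-squares solve from aligned point pairs, the estimation and the average reprojection error could look like the code below. The function names (`fit_projection`, `apply_projection`, `mean_reprojection_error`) and the synthetic data are hypothetical; this is not the authors' implementation, and it omits the coordinate normalization and noise handling a real calibration would need.

```python
import math
import random
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Matrix4 = List[List[float]]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / m[r][r]
    return x


def fit_projection(src: Sequence[Point3], dst: Sequence[Point3]) -> Matrix4:
    """Hypothetical helper: estimate H (H[3][3] = 1) such that dst ~ H * src.

    Each correspondence gives 3 linear equations, so at least 5 points in
    general position are needed for the 15 unknowns of this model.
    """
    if len(src) != len(dst) or len(src) < 5:
        raise ValueError("need >= 5 matched point pairs")
    rows: List[List[float]] = []
    rhs: List[float] = []
    for (x, y, z), target in zip(src, dst):
        for i, t in enumerate(target):
            # t * (h30 x + h31 y + h32 z + 1) = hi0 x + hi1 y + hi2 z + hi3
            row = [0.0] * 15
            row[4 * i:4 * i + 4] = [x, y, z, 1.0]
            row[12:15] = [-t * x, -t * y, -t * z]
            rows.append(row)
            rhs.append(t)
    # Least squares via the normal equations (A^T A) h = A^T b.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(15)] for i in range(15)]
    atb = [sum(r[i] * b for r, b in zip(rows, rhs)) for i in range(15)]
    h = _solve(ata, atb) + [1.0]
    return [h[0:4], h[4:8], h[8:12], h[12:16]]


def apply_projection(h: Matrix4, p: Point3) -> Point3:
    """Map a tracker-space point into the virtual scene, with perspective divide."""
    v = (p[0], p[1], p[2], 1.0)
    out = [sum(h[i][j] * v[j] for j in range(4)) for i in range(4)]
    return (out[0] / out[3], out[1] / out[3], out[2] / out[3])


def mean_reprojection_error(h: Matrix4, src: Sequence[Point3], dst: Sequence[Point3]) -> float:
    """Average Euclidean distance between mapped tracker points and their targets."""
    return sum(math.dist(apply_projection(h, p), q) for p, q in zip(src, dst)) / len(src)


if __name__ == "__main__":
    # Synthetic check (hypothetical values, in meters): a known projective map
    # and 20 noise-free correspondences.
    true_h = [[1.0, 0.02, 0.0, 0.10],
              [-0.02, 1.0, 0.01, -0.05],
              [0.0, -0.01, 1.0, 0.20],
              [0.3, 0.0, 0.2, 1.0]]
    rng = random.Random(0)
    tracker_pts = [tuple(rng.uniform(-0.2, 0.2) for _ in range(3)) for _ in range(20)]
    scene_pts = [apply_projection(true_h, p) for p in tracker_pts]
    h = fit_projection(tracker_pts, scene_pts)
    err_mm = mean_reprojection_error(h, tracker_pts, scene_pts) * 1000.0
    print(f"mean reprojection error: {err_mm:.6f} mm")
```

Because every aligned point only adds three rows to the same linear system, a procedure in which the user aligns several points of one virtual object per repetition contributes several correspondences per repetition to this kind of fit. The 5-point minimum above is a property of this particular 15-parameter model, not a figure taken from the paper.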