Occlusion Handling using Semantic Segmentation and Visibility-Based Rendering for Mixed Reality

PubDate: Jul 2017

Teams: NTT Communication Science Laboratories;The University of Tokyo

Writers: Menandro Roxas, Tomoki Hori, Taiki Fukiage, Yasuhide Okamoto, Takeshi Oishi

PDF: Occlusion Handling using Semantic Segmentation and Visibility-Based Rendering for Mixed Reality

Abstract

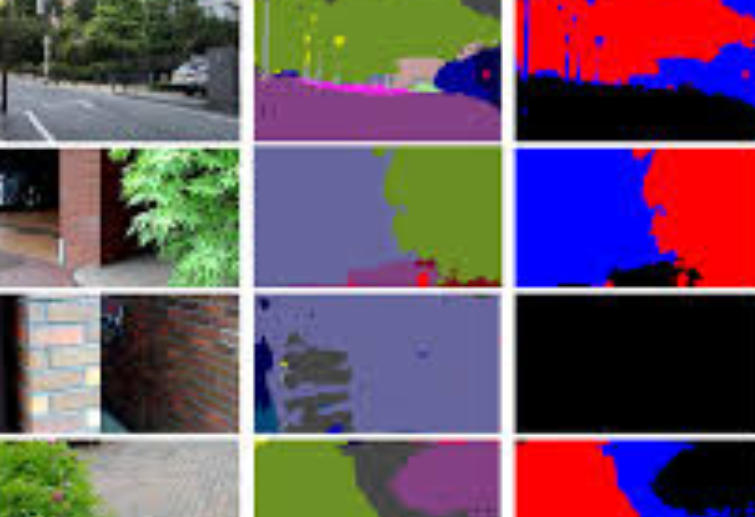

Real-time occlusion handling is a major problem in outdoor mixed reality systems because the complexity of the scene makes it computationally expensive. Using only segmentation, it is difficult to accurately render a virtual object occluded by complex objects such as trees and bushes. In this paper, we propose a novel occlusion handling method for real-time, outdoor, omni-directional mixed reality systems that uses only the information from a monocular image sequence. We first present a semantic segmentation scheme for predicting the amount of visibility for different types of objects in the scene. We simultaneously calculate a foreground probability map using depth estimation derived from optical flow. Finally, we combine the segmentation result and the probability map to render the computer-generated object and the real scene using a visibility-based rendering method. Our results show great improvement in handling occlusions compared to existing blending-based methods.
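
The final step, combining a per-class visibility prediction with a foreground probability map, might be sketched as follows. This is only an illustration under stated assumptions: the abstract does not give the combination rule, so the linear mix used here, the function name `composite_virtual_object`, and all parameter names are hypothetical and not the authors' formulation.

```python
import numpy as np


def composite_virtual_object(real, virtual, virtual_mask,
                             class_map, class_visibility, fg_prob):
    """Blend a rendered virtual layer into a real frame (illustrative only).

    real, virtual    : (H, W, 3) float arrays in [0, 1]
    virtual_mask     : (H, W) float array, 1 where the virtual object is drawn
    class_map        : (H, W) int array of semantic class labels
    class_visibility : (C,) float array, assumed per-class visibility in [0, 1]
                       of an object placed behind that class
                       (e.g. 0 for a wall, about 0.5 for foliage)
    fg_prob          : (H, W) float array, probability that the real pixel
                       lies in front of the virtual object
    """
    # Look up the assumed visibility prior for every pixel from its class label.
    prior = class_visibility[class_map]

    # Assumed combination rule: where the real scene is background the virtual
    # object is fully visible; where it is foreground, the class prior decides.
    visibility = (1.0 - fg_prob) + fg_prob * prior

    # Restrict blending to pixels actually covered by the virtual object.
    alpha = np.clip(visibility * virtual_mask, 0.0, 1.0)[..., None]

    return alpha * virtual + (1.0 - alpha) * real


# Tiny 1x2 example: a white virtual object over a black real frame.
real = np.zeros((1, 2, 3))
virtual = np.ones((1, 2, 3))
mask = np.ones((1, 2))
class_map = np.array([[0, 1]])           # pixel 0: opaque class, pixel 1: foliage-like
class_visibility = np.array([0.0, 0.5])  # hypothetical per-class values
fg_prob = np.ones((1, 2))                # real scene is in front everywhere

blended = composite_virtual_object(real, virtual, mask,
                                   class_map, class_visibility, fg_prob)
```

In this toy case the pixel behind the opaque class stays fully real, while the pixel behind the foliage-like class shows the virtual object at half intensity. A plain alpha blend would instead apply a single opacity regardless of what occludes the object.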