Perceptual deep depth super-resolution

PubDate: Sep 2019

Teams: ¹Skolkovo Institute of Science and Technology, ²Higher School of Economics, ³New York University

Writers: Oleg Voynov¹; Alexey Artemov¹; Vage Egiazarian¹; Alexander Notchenko¹; Gleb Bobrovskikh¹,²; Denis Zorin³,¹; Evgeny Burnaev¹

PDF: Perceptual deep depth super-resolution

Abstract

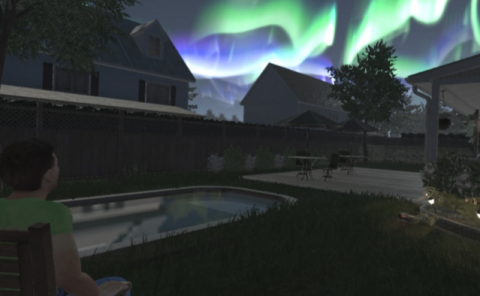

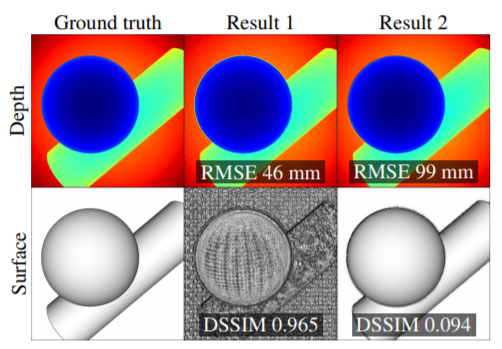

RGBD images, combining high-resolution color and lower-resolution depth from various types of depth sensors, are increasingly common. One can significantly improve the resolution of depth maps by taking advantage of color information; deep learning methods make combining color and depth information particularly easy. However, fusing these two sources of data may lead to a variety of artifacts. If depth maps are used to reconstruct 3D shapes, e.g., for virtual reality applications, the visual quality of upsampled images is particularly important. The main idea of our approach is to measure the quality of depth map upsampling using renderings of resulting 3D surfaces. We demonstrate that a simple visual appearance-based loss, when used with either a trained CNN or simply a deep prior, yields significantly improved 3D shapes, as measured by a number of existing perceptual metrics. We compare this approach with a number of existing optimization and learning-based techniques.
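The core idea is to score an upsampled depth map by how its rendered surface looks rather than by per-pixel depth error. A small NumPy sketch can illustrate it. This is not the authors' implementation. All of the following are illustrative assumptions: the function names (`normals_from_depth`, `render_lambertian`, `visual_loss`, `depth_mse`), the orthographic height-field camera, Lambertian shading without shadows, the three light directions, and plain mean squared error between renderings.

```python
import numpy as np


def normals_from_depth(depth, pixel_size=1.0):
    """Unit normals of the height field z = depth[y, x] (hypothetical helper).

    Assumes an orthographic view with square pixels of side `pixel_size`;
    a real pipeline would unproject with the camera intrinsics instead.
    """
    # np.gradient returns derivatives along axis 0 (y, rows), then axis 1 (x, columns).
    dz_dy, dz_dx = np.gradient(depth, pixel_size)
    # The normal of z = f(x, y) is proportional to (-df/dx, -df/dy, 1).
    n = np.stack([-dz_dx, -dz_dy, np.ones_like(depth)], axis=-1)
    return n / np.linalg.norm(n, axis=-1, keepdims=True)


def render_lambertian(depth, light_dir=(0.0, 0.0, 1.0)):
    """Shade the surface with one directional light (Lambertian, no shadows)."""
    l = np.asarray(light_dir, dtype=float)
    l = l / np.linalg.norm(l)
    # Cosine between normal and light direction; back-facing pixels are clipped to 0.
    return np.clip(normals_from_depth(depth) @ l, 0.0, 1.0)


# Assumed set of light directions; the paper's rendering setup may differ.
LIGHTS = ((0.0, 0.0, 1.0), (1.0, 1.0, 1.0), (-1.0, 1.0, 1.0))


def visual_loss(depth_pred, depth_gt, lights=LIGHTS):
    """Mean squared difference between renderings, averaged over the lights."""
    errs = [
        np.mean((render_lambertian(depth_pred, l) - render_lambertian(depth_gt, l)) ** 2)
        for l in lights
    ]
    return float(np.mean(errs))


def depth_mse(depth_pred, depth_gt):
    """Plain per-pixel depth error, for comparison."""
    return float(np.mean((depth_pred - depth_gt) ** 2))


# Toy example: a smooth Gaussian bump as the ground-truth depth map.
yy, xx = np.mgrid[0:64, 0:64].astype(float)
gt = 5.0 * np.exp(-((xx - 32.0) ** 2 + (yy - 32.0) ** 2) / 200.0)
pred_offset = gt + 0.5                              # constant shift, same surface shape
pred_noisy = gt + 0.2 * np.sin(np.pi / 2.0 * xx)    # small high-frequency ripple along x

for name, pred in [("offset", pred_offset), ("ripple", pred_noisy)]:
    print(name, "depth MSE:", depth_mse(pred, gt), "visual loss:", visual_loss(pred, gt))
```

The toy comparison shows why a rendering-based score behaves differently from depth MSE. A constant offset changes every depth value yet leaves the shaded surface unchanged. A small ripple barely moves the depth values but visibly alters the shading. To train a CNN or optimize a deep prior with such a loss, the same computation would need to be written in an autodiff framework so that gradients can flow back to the predicted depth.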