VRGym: A Virtual Testbed for Physical and Interactive AI

PubDate: Apr 2019

Teams: UCLA Center for Vision

Writers: Xu Xie, Hangxin Liu, Zhenliang Zhang, Yuxing Qiu, Feng Gao, Siyuan Qi, Yixin Zhu, Song-Chun Zhu

PDF: VRGym: A Virtual Testbed for Physical and Interactive AI

Abstract

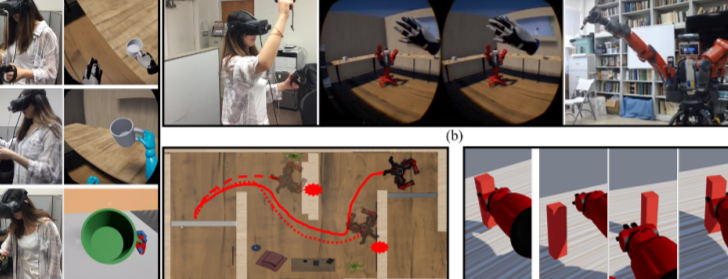

We propose VRGym, a virtual reality testbed for realistic human-robot interaction. Unlike existing toolkits and virtual reality environments, VRGym emphasizes building and training both physical and interactive agents for robotics, machine learning, and cognitive science. VRGym leverages mechanisms that can generate diverse 3D scenes with high realism through physics-based simulation. We demonstrate that VRGym is able to (i) collect human interactions and fine manipulations, (ii) accommodate various robots with a ROS bridge, (iii) support experiments for human-robot interaction, and (iv) provide toolkits for training state-of-the-art machine learning algorithms. We hope VRGym can help advance general-purpose robotics and machine learning agents, as well as assist human studies in the field of cognitive science.