Deep Multi Depth Panoramas for View Synthesis

Published: Aug 2020

Affiliations: UC San Diego, UC Berkeley, Adobe Research

Authors: Kai-En Lin, Zexiang Xu, Ben Mildenhall, Pratul P. Srinivasan, Yannick Hold-Geoffroy, Stephen DiVerdi, Qi Sun, Kalyan Sunkavalli, Ravi Ramamoorthi

PDF: Deep Multi Depth Panoramas for View Synthesis

Abstract

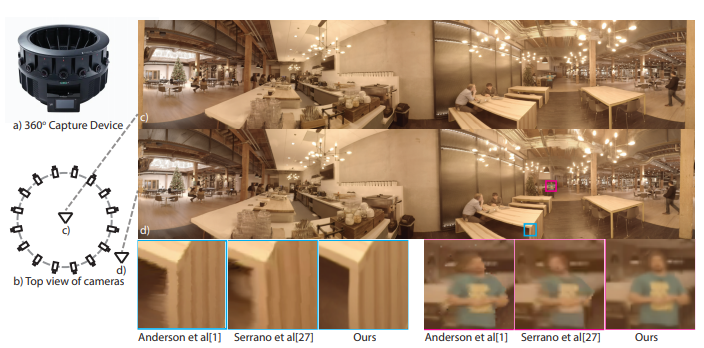

We propose a learning-based approach for novel view synthesis for multi-camera 360° panorama capture rigs. Previous work constructs RGBD panoramas from such data, allowing for view synthesis with small amounts of translation, but cannot handle the disocclusions and view-dependent effects that are caused by large translations. To address this issue, we present a novel scene representation, the Multi Depth Panorama (MDP), that consists of multiple RGBDα panoramas that represent both scene geometry and appearance. We demonstrate a deep neural network-based method to reconstruct MDPs from multi-camera 360° images. MDPs are more compact than previous 3D scene representations and enable high-quality, efficient new view rendering. We demonstrate this via experiments on both synthetic and real data and comparisons with previous state-of-the-art methods spanning both learning-based approaches and classical RGBD-based methods.
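The abstract does not spell out how the multiple RGBDα panoramas of an MDP are combined into one image. A common way to render layered RGBα representations is to sort the layers by depth and alpha-composite them with the standard "over" operator. The sketch below shows only that compositing step at a single pixel. It assumes the layers have already been reprojected into the target view, and the names `LayerSample` and `composite_pixel` are hypothetical. This is not presented as the paper's renderer.

```python
from dataclasses import dataclass
from typing import List, Tuple

RGB = Tuple[float, float, float]


@dataclass
class LayerSample:
    """One layer's value at a single pixel (hypothetical structure, not from the paper)."""
    rgb: RGB       # color, each channel in [0, 1]
    depth: float   # distance from the viewpoint; smaller means nearer
    alpha: float   # opacity in [0, 1]


def composite_pixel(samples: List[LayerSample]) -> Tuple[RGB, float]:
    """Front-to-back "over" compositing of the layers covering one pixel.

    Assumption: the samples are already reprojected into the target view;
    reprojection of each RGBD-alpha panorama is not shown here.
    Returns the composited color and the accumulated opacity.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked by nearer layers
    for s in sorted(samples, key=lambda layer: layer.depth):  # nearest layer first
        weight = transmittance * s.alpha
        for c in range(3):
            color[c] += weight * s.rgb[c]
        transmittance *= 1.0 - s.alpha
    return (color[0], color[1], color[2]), 1.0 - transmittance


if __name__ == "__main__":
    front = LayerSample(rgb=(1.0, 0.0, 0.0), depth=2.0, alpha=0.5)  # half-transparent red
    back = LayerSample(rgb=(0.0, 0.0, 1.0), depth=5.0, alpha=1.0)   # opaque blue
    # Input order does not matter because samples are sorted by depth.
    print(composite_pixel([back, front]))  # ((0.5, 0.0, 0.5), 1.0)
```

Front-to-back order lets a renderer stop early once the transmittance is near zero. It also shows why a layered representation helps with the disocclusions the abstract mentions: a farther layer can fill in content that a single RGBD panorama would have lost behind a nearer surface.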