Expressive Telepresence via Modular Codec Avatars

PubDate: August, 2020

Teams: University of Toronto, Vector Institute, Facebook Reality Lab

Writers: Hang Chu, Shugao Ma, Fernando De la Torre, Sanja Fidler, Yaser Sheikh

PDF: Expressive Telepresence via Modular Codec Avatars

Abstract

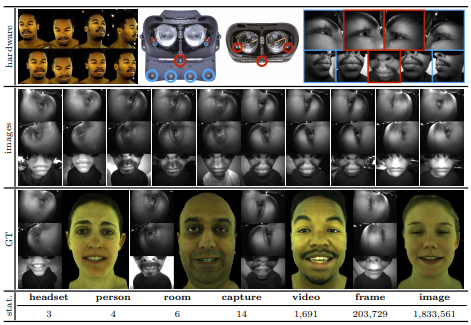

VR telepresence consists of interacting with another human, represented by an avatar, in a virtual space. Today most avatars are cartoon-like, but soon the technology will allow video-realistic ones. This paper works toward that goal and presents Modular Codec Avatars (MCA), a method to generate hyper-realistic faces driven by the cameras in the VR headset. MCA extends traditional Codec Avatars (CA) by replacing the holistic models with a learned modular representation. Traditional person-specific CAs are learned from few training samples, and typically lack robustness and have limited expressiveness when transferring facial expressions. MCAs solve these issues by learning a modulated adaptive blending of different facial components as well as an exemplar-based latent alignment. We demonstrate that MCA achieves improved expressiveness and robustness w.r.t. CA in a variety of real-world datasets and practical scenarios. Finally, we showcase new applications in VR telepresence enabled by the proposed model.