Adaptive Generation of Phantom Limbs Using Visible Hierarchical Autoencoders

PubDate: Oct 2019

Teams: Central State University

Writers: Dakila Ledesma, Yu Liang, Dalei Wu

PDF: Adaptive Generation of Phantom Limbs Using Visible Hierarchical Autoencoders

Abstract

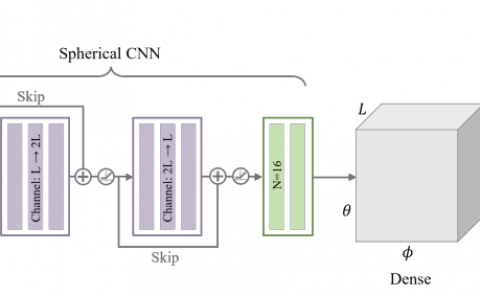

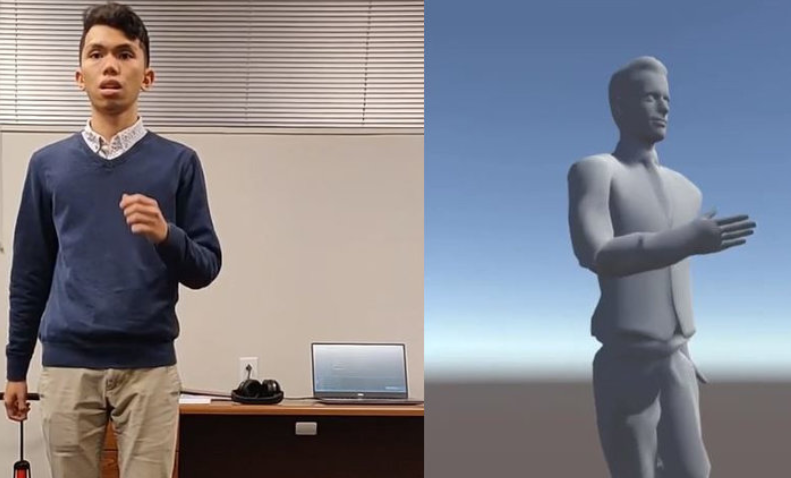

This paper proposes a visible hierarchical autoencoder for the adaptive generation of phantom limbs according to the kinetic behavior of functional body parts, which is measured by heterogeneous kinetic sensors. The proposed visible hierarchical autoencoder consists of interpretable and multi-correlated autoencoder pipelines, which are directly derived from a hierarchical network described in a forest data structure. According to a specified kinetic script (e.g., dancing or running) and the user's physical condition, the hierarchical network is extracted from the human musculoskeletal network, which is made up of multiple body components (e.g., muscles, bones, and joints) that are bio-mechanically, functionally, or nervously correlated with each other and exhibit mostly non-divergent kinetic behaviors. Multi-layer perceptron (MLP) regressor models, as well as several variations of autoencoder models, are investigated for the sequential generation of missing or dysfunctional limbs. The resulting kinematic behavior of the phantom limbs will be constructed using virtual reality and augmented reality (VR/AR), actuators, and potentially a controller for a prosthesis (an artificial device that replaces a missing body part). The work aims to develop practical, innovative exercise methods that (1) engage individuals of all ages, including those with chronic health conditions and/or disabilities, in regular physical activity, (2) accelerate the rehabilitation of patients, and (3) relieve users' phantom limb pain. The physiological and psychological impact of this work will be critically assessed in future work.
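As a rough illustration of the forest data structure mentioned in the abstract, the sketch below stores body parts in a parent map and, for a missing part, lists the functional parts in the same tree that could serve as inputs to one regression or autoencoder pipeline. The abstract does not give the actual construction, so the part names, the `PARENT` map, and the same-tree selection rule are all assumptions made for illustration.

```python
# Hypothetical parent map: each body part points to the part it attaches to,
# or None if it is the root of its own tree. Several roots make a forest.
# All part names here are illustrative, not taken from the paper.
PARENT = {
    "pelvis": None,
    "left_hip": "pelvis",
    "left_knee": "left_hip",
    "left_ankle": "left_knee",
    "right_hip": "pelvis",
    "right_knee": "right_hip",
    "chest": None,
    "left_shoulder": "chest",
    "left_elbow": "left_shoulder",
}


def root_of(node, parent):
    """Walk up the parent links until the root of the node's tree is reached."""
    while parent[node] is not None:
        node = parent[node]
    return node


def trees(parent):
    """Group all parts by the root of their tree (one entry per tree)."""
    groups = {}
    for node in parent:
        groups.setdefault(root_of(node, parent), []).append(node)
    return {root: sorted(members) for root, members in groups.items()}


def pipeline_inputs(missing, parent, functional):
    """Functional parts that share a tree with the missing part.

    Assumption: one pipeline per missing part, fed by sensors on the
    functional parts of the same tree.
    """
    root = root_of(missing, parent)
    return sorted(p for p in functional if root_of(p, parent) == root)


# Example: the left knee and ankle are missing; everything else is functional.
missing_parts = {"left_knee", "left_ankle"}
functional_parts = set(PARENT) - missing_parts
inputs = pipeline_inputs("left_ankle", PARENT, functional_parts)
```

Here `inputs` contains only lower-body parts, because the upper-body parts belong to a separate tree rooted at `chest`. A real system would presumably derive the forest from the kinetic script and the user's condition rather than from a fixed table.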