Attack Trees for Security and Privacy in Social Virtual Reality Learning Environments

PubDate: Nov 2019

Teams: University of Missouri-Columbia, Webster University

Writers: Samaikya Valluripally, Aniket Gulhane, Reshmi Mitra, Khaza Anuarul Hoque, Prasad Calyam

PDF: Attack Trees for Security and Privacy in Social Virtual Reality Learning Environments

Abstract

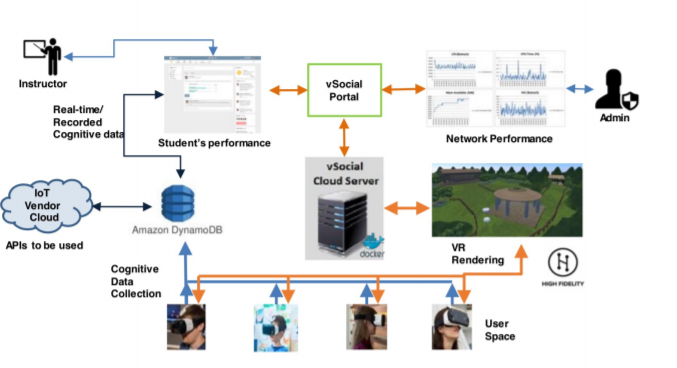

A social Virtual Reality Learning Environment (VRLE) is a novel edge computing platform for collaboration among distributed users. Given that VRLEs are used for critical applications (e.g., special education, public safety training), it is important to address their security and privacy issues. In this paper, we present a novel framework to obtain quantitative assessments of threats and vulnerabilities for VRLEs. Based on use cases from an actual social VRLE, viz. vSocial, we first model security and privacy threats using attack trees. Subsequently, these attack trees are converted into stochastic timed automata representations that allow for rigorous statistical model checking. Such an analysis helps us adopt pertinent design principles, such as hardening, diversity, and the principle of least privilege, to enhance the resilience of social VRLEs. Through experiments in a vSocial case study, we demonstrate the effectiveness of our attack tree modeling: comparing scenarios before and after adopting the design principles, the probability of loss of integrity (security) is reduced by 26% and privacy leakage (privacy) by 80%.
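To make the idea of quantifying an attack tree concrete, the following is a minimal Python sketch, not the paper's method. The paper converts attack trees into stochastic timed automata and applies statistical model checking; this sketch instead uses a much simpler static calculation that assumes independent leaf events, where an AND gate multiplies child probabilities and an OR gate combines them as one minus the product of their complements. The node names, the tree shape, the leaf probabilities, and the helpers `Node`, `success_probability`, and `build_tree` are all hypothetical and chosen only for illustration; they are not taken from vSocial or from the paper's results.

```python
from dataclasses import dataclass, field
from math import prod
from typing import List


@dataclass
class Node:
    """One node of a (hypothetical) attack tree."""
    name: str
    gate: str = "LEAF"        # "LEAF", "AND", or "OR"
    p: float = 0.0            # leaf success probability (illustrative value)
    children: List["Node"] = field(default_factory=list)


def success_probability(node: Node) -> float:
    """Probability that the attack goal at `node` is reached.

    Assumes all leaf events are independent (a simplifying assumption
    of this sketch, not a claim about the paper's model).
    """
    if node.gate == "LEAF":
        return node.p
    child_ps = [success_probability(c) for c in node.children]
    if node.gate == "AND":    # every child step must succeed
        return prod(child_ps)
    if node.gate == "OR":     # at least one child step must succeed
        return 1.0 - prod(1.0 - q for q in child_ps)
    raise ValueError(f"unknown gate type: {node.gate}")


def build_tree(p_credential_attack: float) -> Node:
    """Build a small illustrative tree; all names and numbers are made up."""
    unauthorized_access = Node(
        "unauthorized access",
        gate="AND",
        children=[
            Node("credential attack", p=p_credential_attack),
            Node("privilege escalation", p=0.4),
        ],
    )
    tampering = Node("content tampering", p=0.1)
    return Node("loss of integrity", gate="OR",
                children=[unauthorized_access, tampering])


# "Before" scenario, then an "after" scenario in which a hardening measure
# is assumed to halve the credential attack's success probability.
baseline = build_tree(p_credential_attack=0.5)
hardened = build_tree(p_credential_attack=0.25)

print(f"before hardening: {success_probability(baseline):.2f}")
print(f"after hardening:  {success_probability(hardened):.2f}")
```

Comparing the two printed values mirrors, in a very reduced form, the before and after comparison described in the abstract. A static calculation like this ignores timing, which is one reason a timed, stochastic formalism such as the one the paper uses can give a richer picture.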