GrabAR: Occlusion-aware Grabbing Virtual Objects in AR

PubDate: Jun 2020

Teams: The Chinese University of Hong Kong

Writers: Xiao Tang, Xiaowei Hu, Chi-Wing Fu, Daniel Cohen-Or

PDF: GrabAR: Occlusion-aware Grabbing Virtual Objects in AR

Abstract

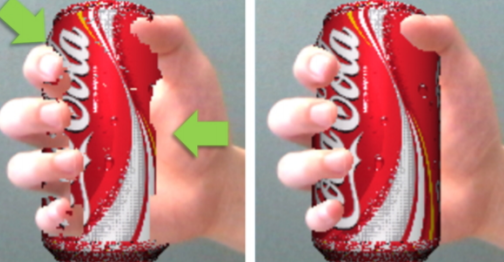

Existing augmented reality (AR) applications often ignore occlusion between real hands and virtual objects when incorporating virtual objects into the user's view. The challenges come from the lack of accurate depth and the mismatch between real and virtual depth. This paper presents GrabAR, a new approach that directly predicts the real-and-virtual occlusion and bypasses depth acquisition and inference. The goal is to enhance AR applications with interactions between the real hand and grabbable virtual objects. Taking paired images of the hand and the object as inputs, the authors formulate a neural network that learns to generate the occlusion mask. To train the network, they compile a synthetic dataset for pre-training and a real dataset for fine-tuning, which reduces the burden of manual labeling and addresses the domain difference between synthetic and real images. They then embed the trained network in a prototype AR system that supports hand grabbing of various virtual objects, demonstrate the system's performance both quantitatively and qualitatively, and showcase interaction scenarios in which a bare hand can grab virtual objects and manipulate them directly.
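To make the role of the occlusion mask concrete, here is a minimal sketch of how a predicted mask could be used to composite a rendered virtual object into a camera frame. It is an illustration under stated assumptions, not the paper's implementation: the function name `composite_with_occlusion`, its parameters, and the convention that the mask is 1 where the real hand lies in front of the virtual object are all hypothetical, and the mask here is written by hand rather than produced by a network.

```python
import numpy as np


def composite_with_occlusion(real_frame, virtual_rgb, virtual_alpha, hand_in_front):
    """Blend a rendered virtual object into a camera frame.

    All names and conventions here are assumptions for illustration.
    real_frame:    (H, W, 3) camera image, floats in [0, 1]
    virtual_rgb:   (H, W, 3) rendered virtual object, floats in [0, 1]
    virtual_alpha: (H, W) coverage of the virtual object (1 = object pixel)
    hand_in_front: (H, W) occlusion mask, assumed to be 1 where the real
                   hand should hide the virtual object
    """
    # The virtual object is visible only where it is rendered AND
    # the hand is not predicted to be in front of it.
    visible = virtual_alpha * (1.0 - hand_in_front)
    visible = visible[..., None]  # broadcast over the colour channels
    return visible * virtual_rgb + (1.0 - visible) * real_frame


# Tiny 2x2 example: the object covers the top row, and the hand
# occludes it at the top-left pixel only.
real = np.full((2, 2, 3), 0.2)
virtual = np.ones((2, 2, 3))
alpha = np.array([[1.0, 1.0], [0.0, 0.0]])
mask = np.array([[1.0, 0.0], [0.0, 0.0]])

result = composite_with_occlusion(real, virtual, alpha, mask)
```

In this toy example the top-right pixel shows the virtual object, while the top-left pixel keeps the camera image because the mask marks the hand as being in front. A soft mask with values between 0 and 1 would blend the two sources at hand boundaries.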