Dataset for Eye Tracking on a Virtual Reality Platform

PubDate: June, 2020

Teams: University College London, Facebook Reality Labs, Google via Adecco

Writers: Stephan J. Garbin, Yiru Shen, Immo Schuetz, Robert Cavin, Gregory Hughes, Oleg V. Komogortsev, Sachin Talathi

PDF: Dataset for Eye Tracking on a Virtual Reality Platform

Abstract

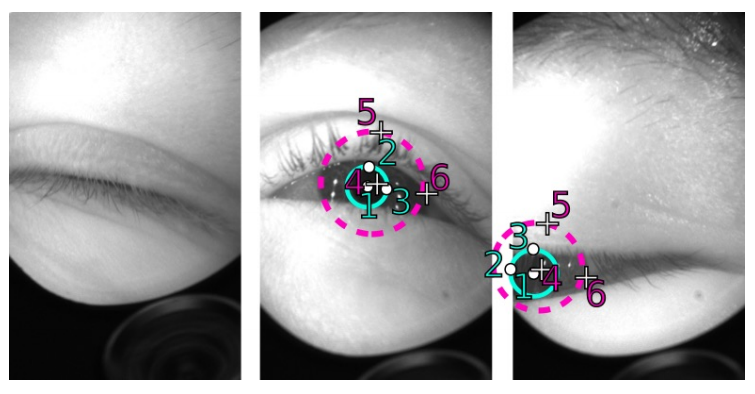

We present a large-scale dataset of eye images captured using a virtual-reality (VR) head-mounted display fitted with two synchronized eye-facing cameras at a frame rate of 200 Hz under controlled illumination. The dataset is compiled from video capture of the eye region collected from 152 individual participants and is divided into four subsets: (i) 12,759 images with pixel-level annotations for key eye regions: iris, pupil, and sclera; (ii) 252,690 unlabeled eye images; (iii) 91,200 frames from randomly selected video sequences of 1.5 seconds in duration; and (iv) 143 pairs of left and right point-cloud data compiled from corneal topography of eye regions, collected from a subset of 143 of the 152 participants in the study. A baseline experiment was evaluated on the dataset for the task of semantic segmentation of pupil, iris, sclera, and background, achieving a mean intersection-over-union (mIoU) of 98.3%. We anticipate that this dataset will create opportunities for researchers in the eye-tracking community and the broader machine learning and computer vision community to advance the state of eye tracking for VR applications, which in turn will have wider implications for human-computer interaction.
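
For readers unfamiliar with the mIoU metric quoted above, the following is a minimal sketch of how it can be computed for a four-class segmentation mask. The class-index mapping, the `mean_iou` helper, and the toy masks are assumptions made for illustration; the abstract does not state the label encoding or the exact averaging protocol used in the baseline experiment, and conventions vary (for example, averaging per image versus over the whole test set).

```python
# Hypothetical class order for illustration; not the dataset's official encoding.
CLASSES = ("background", "sclera", "iris", "pupil")


def mean_iou(pred, target, num_classes=len(CLASSES)):
    """Mean intersection-over-union across classes.

    pred and target are equally sized 2D lists of integer class labels.
    Classes absent from both masks are skipped (one common convention).
    """
    intersection = [0] * num_classes
    union = [0] * num_classes
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p == t:
                # Pixel belongs to both the predicted and true region of class p.
                intersection[p] += 1
                union[p] += 1
            else:
                # Pixel enlarges the union of both the predicted and the true class.
                union[p] += 1
                union[t] += 1
    ious = [i / u for i, u in zip(intersection, union) if u > 0]
    return sum(ious) / len(ious)


# Toy 3x4 masks: one sclera pixel is mislabeled as iris in the prediction.
target = [
    [0, 0, 1, 1],
    [0, 2, 2, 1],
    [0, 2, 3, 3],
]
pred = [
    [0, 0, 1, 1],
    [0, 2, 2, 2],
    [0, 2, 3, 3],
]

print(round(mean_iou(pred, target), 3))
```

In this toy case the per-class IoU values are 1, 2/3, 3/4, and 1, so a single mislabeled pixel lowers both the sclera and iris scores before averaging.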