MatryODShka: Real-time 6DoF Video View Synthesis using Multi-Sphere Images

PubDate: Aug 2020

Teams: Brown University; Carnegie Mellon University; University of Bath

Writers: Benjamin Attal, Selena Ling, Aaron Gokaslan, Christian Richardt, James Tompkin

PDF: MatryODShka: Real-time 6DoF Video View Synthesis using Multi-Sphere Images

Abstract

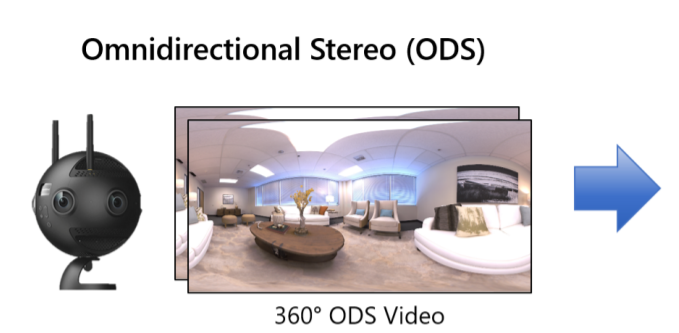

We introduce a method to convert stereo 360° (omnidirectional stereo) imagery into a layered, multi-sphere image representation for six degree-of-freedom (6DoF) rendering. Stereo 360° imagery can be captured from multi-camera systems for virtual reality (VR), but it lacks motion parallax and correct-in-all-directions disparity cues. Together, these shortcomings can quickly lead to VR sickness when viewing content. One solution is to generate a format suitable for 6DoF rendering, for example by estimating depth. However, this raises the question of how to handle disoccluded regions in dynamic scenes. Our approach is to simultaneously learn depth and disocclusions via a multi-sphere image representation, which can be rendered with correct 6DoF disparity and motion parallax in VR. This significantly improves comfort for the viewer, and the representation can be inferred and rendered in real time on modern GPU hardware. Together, these advances move towards making VR video a more comfortable immersive medium.
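As a rough illustration of how a layered representation of this kind could be rendered, the sketch below blends RGBA samples taken where a single viewing ray crosses each concentric sphere, using standard back-to-front "over" compositing. The abstract does not spell out the rendering step, so the function name, the sample ordering, and the use of plain alpha compositing are assumptions made for illustration, not the authors' implementation.

```python
from typing import List, Tuple

# One sample per sphere layer: (red, green, blue, alpha), each in [0, 1].
RGBA = Tuple[float, float, float, float]


def composite_back_to_front(samples: List[RGBA]) -> Tuple[float, float, float]:
    """Blend per-layer samples along one ray with the 'over' operator.

    Hypothetical helper: `samples` is assumed to be ordered from the
    outermost (farthest) sphere to the innermost (nearest) sphere.
    """
    r = g = b = 0.0
    for sr, sg, sb, alpha in samples:
        # The nearer layer covers what is behind it in proportion to alpha.
        r = sr * alpha + r * (1.0 - alpha)
        g = sg * alpha + g * (1.0 - alpha)
        b = sb * alpha + b * (1.0 - alpha)
    return (r, g, b)


# An opaque blue far layer seen through a half-transparent red near layer.
pixel = composite_back_to_front([(0.0, 0.0, 1.0, 1.0), (1.0, 0.0, 0.0, 0.5)])
print(pixel)
```

In a sketch like this, moving the viewpoint changes where the ray meets each sphere, so the layers shift relative to one another and produce parallax, while partially transparent layers let content behind a foreground object show through at disocclusions.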