GANerated Hands for Real-Time 3D Hand Tracking from Monocular RGB

Teams: (1) Max Planck Institute for Informatics (GVV Group), (2) Saarland Informatics Campus, (3) Stanford University, (4) Universidad Rey Juan Carlos

Writers: Franziska Mueller (1,2), Florian Bernard (1,2), Oleksandr Sotnychenko (1,2), Dushyant Mehta (1,2), Srinath Sridhar (3), Dan Casas (4), Christian Theobalt

Publication date: March 2018

Abstract

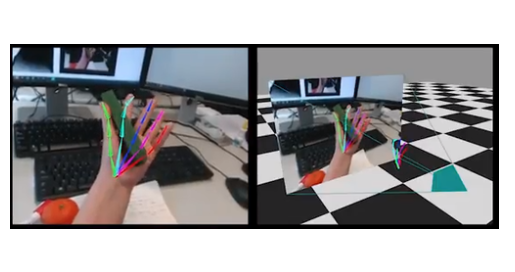

We address the highly challenging problem of real-time 3D hand tracking from a monocular RGB-only sequence. Our tracking method combines a convolutional neural network (CNN) with a kinematic 3D hand model, so that it generalizes well to unseen data, is robust to occlusions and varying camera viewpoints, and produces anatomically plausible and temporally smooth hand motions. To train our CNN, we propose a novel approach for generating synthetic training data that is based on a geometrically consistent image-to-image translation network. Specifically, we use a neural network that translates synthetic images to “real” images, such that the generated images follow the same statistical distribution as real-world hand images. To train this translation network, we combine an adversarial loss and a cycle-consistency loss with a geometric consistency loss that preserves geometric properties (such as hand pose) during translation. We demonstrate that our hand tracking system outperforms the current state of the art on challenging RGB-only footage.
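
The combination of the three loss terms named in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. The abstract does not say how each term is computed, so everything below is an assumption made for illustration: the non-saturating adversarial term, the L1 cycle term, the use of binary cross-entropy on hand silhouettes as the geometric term, the function names, and the weights `w_cycle` and `w_geo`.

```python
import numpy as np

EPS = 1e-8  # numerical guard for logarithms


def adversarial_loss(disc_scores_on_fake):
    """Generator-side adversarial term: -log D(G(x)), averaged.

    Assumes discriminator outputs are probabilities in (0, 1].
    """
    return float(-np.mean(np.log(disc_scores_on_fake + EPS)))


def cycle_consistency_loss(original, reconstructed):
    """Mean absolute error between an image and its round-trip translation."""
    return float(np.mean(np.abs(original - reconstructed)))


def geometric_consistency_loss(mask_before, mask_after):
    """Binary cross-entropy between hand silhouettes before and after translation.

    Assumption: geometry is compared via a binary silhouette mask
    (mask_before) and a predicted silhouette probability map (mask_after).
    """
    p = np.clip(mask_after, EPS, 1.0 - EPS)
    bce = mask_before * np.log(p) + (1.0 - mask_before) * np.log(1.0 - p)
    return float(-np.mean(bce))


def total_translation_loss(disc_scores_on_fake, original, reconstructed,
                           mask_before, mask_after,
                           w_cycle=10.0, w_geo=1.0):
    """Weighted sum of the three terms; the weights are hypothetical."""
    return (adversarial_loss(disc_scores_on_fake)
            + w_cycle * cycle_consistency_loss(original, reconstructed)
            + w_geo * geometric_consistency_loss(mask_before, mask_after))
```

In this sketch, a translation that fools the discriminator, reconstructs the input after a round trip, and leaves the silhouette unchanged yields a total loss near zero, while a translation that alters the hand outline is penalized by the geometric term even if the other two terms are small.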