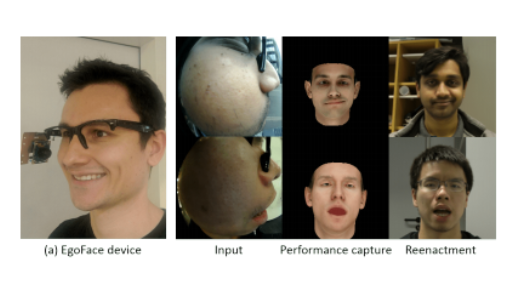

EgoFace: Egocentric Face Performance Capture and Videorealistic Reenactment

Title: EgoFace: Egocentric Face Performance Capture and Videorealistic Reenactment

Teams: Hamad Bin Khalifa University

Writers: Mohamed Elgharib, Mallikarjun BR, Ayush Tewari, Hyeongwoo Kim, Wentao Liu, Hans-Peter Seidel, Christian Theobalt

Publication date: May 2019

Abstract

ace performance capture and reenactment techniques use multiple cameras and sensors, positioned at a distance from the face or mounted on heavy wearable devices. This limits their applications in mobile and outdoor environments. We present EgoFace, a radically new lightweight setup for face performance capture and front-view videorealistic reenactment using a single egocentric RGB camera. Our lightweight setup allows operations in uncontrolled environments, and lends itself to telepresence applications such as video-conferencing from dynamic environments. The input image is projected into a low dimensional latent space of the facial expression parameters. Through careful adversarial training of the parameter-space synthetic rendering, a videorealistic animation is produced. Our problem is challenging as the human visual system is sensitive to the smallest face irregularities that could occur in the final results. This sensitivity is even stronger for video results. Our solution is trained in a pre-processing stage, through a supervised manner without manual annotations. EgoFace captures a wide variety of facial expressions, including mouth movements and asymmetrical expressions. It works under varying illuminations, background, movements, handles people from different ethnicities and can operate in real time.