Simulating Movement Interactions Between Avatars & Agents in Virtual Worlds Using Human Motion Constraints

PubDate: August 2018

Teams: University of North Carolina

Writers: Sahil Narang; Andrew Best; Dinesh Manocha

Abstract

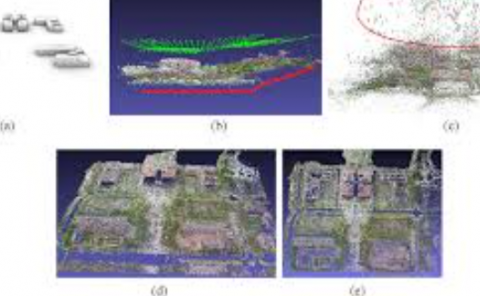

We present an interactive algorithm to generate plausible movements for human-like agents interacting with other agents or avatars in a virtual environment. Our approach takes into account high-dimensional human motion constraints and bio-mechanical constraints to compute collision-free trajectories for each agent. We present a novel full-body movement constrained-velocity computation algorithm that can easily be combined with many existing motion synthesis techniques. Compared to prior local navigation methods, our formulation reduces artefacts that arise in dense scenarios and close interactions, and results in smoother and more plausible locomotion behaviors. We evaluated the benefits of our new algorithm in single-agent and multi-agent environments. We investigated the perception of a single agent’s movements in dense scenarios and observed that our algorithm has a strong positive effect on the perceived quality of the simulation. Our approach also allows the user to interact with the agents from a first-person perspective in immersive settings. We conducted a study to investigate the perception of such avatar-agent interactions and found that interactions generated using our approach lead to an increase in the user’s sense of co-presence.
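The abstract does not spell out the velocity computation, so the following is only a minimal sketch of the general idea of picking a collision-free velocity under motion constraints, not the authors' algorithm. It assumes disc-shaped agents, neighbors moving at constant velocity, and a simple speed limit plus turning limit as a stand-in for the human motion and bio-mechanical constraints. All names and parameters (`time_to_collision`, `choose_velocity`, `max_turn`, `horizon`) are hypothetical.

```python
import math

def time_to_collision(rel_pos, rel_vel, radius_sum):
    """Earliest t >= 0 at which two discs touch, or inf if they never do.

    rel_pos and rel_vel are the neighbor's position and velocity
    relative to the agent.
    """
    px, py = rel_pos
    vx, vy = rel_vel
    c = px * px + py * py - radius_sum ** 2
    if c <= 0:
        return 0.0  # already overlapping
    a = vx * vx + vy * vy
    b = px * vx + py * vy
    if a == 0 or b >= 0:
        return math.inf  # no relative motion, or moving apart
    disc = b * b - a * c
    if disc < 0:
        return math.inf  # paths never come within radius_sum
    return (-b - math.sqrt(disc)) / a

def choose_velocity(pos, heading, pref_vel, neighbors,
                    max_speed=1.5, max_turn=math.pi / 4,
                    horizon=3.0, radius=0.3,
                    n_speeds=4, n_angles=9):
    """Sample velocities the agent can reach (assumed limits on speed and
    turning), drop those that collide within `horizon` seconds, and return
    the survivor closest to the preferred velocity.

    neighbors: list of ((x, y), (vx, vy), radius) tuples.
    Returns (0.0, 0.0) if no sampled velocity is collision-free.
    """
    best, best_cost = (0.0, 0.0), math.inf
    for i in range(n_angles):
        angle = heading - max_turn + 2 * max_turn * i / (n_angles - 1)
        for j in range(n_speeds + 1):
            speed = max_speed * j / n_speeds
            v = (speed * math.cos(angle), speed * math.sin(angle))
            safe = all(
                time_to_collision(
                    (n_pos[0] - pos[0], n_pos[1] - pos[1]),
                    (n_vel[0] - v[0], n_vel[1] - v[1]),
                    radius + n_radius,
                ) >= horizon
                for n_pos, n_vel, n_radius in neighbors
            )
            cost = math.hypot(v[0] - pref_vel[0], v[1] - pref_vel[1])
            if safe and cost < best_cost:
                best, best_cost = v, cost
    return best
```

With no neighbors, the function returns the sampled velocity nearest the preferred one. With a static obstacle directly ahead, it falls back to a slower or turned velocity that stays collision-free over the horizon. A full-body approach like the one described would replace the speed and turning limits with constraints derived from the character's motion data.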