Towards a Real-Time Environment Reconstruction for VR-Based Teleoperation Through Model Segmentation

PubDate: January 2019

Teams: Autonomous Systems (ICTA) Framatome GmbH; Karlsruhe Institute of Technology (KIT); Institute for Factory Automation and Production Systems (FAPS)

Writers: Sebastian Kohn; Andreas Blank; David Puljiz; Lothar Zenkel; Oswald Bieber; Bjorn Hein; Jorg Franke

Abstract

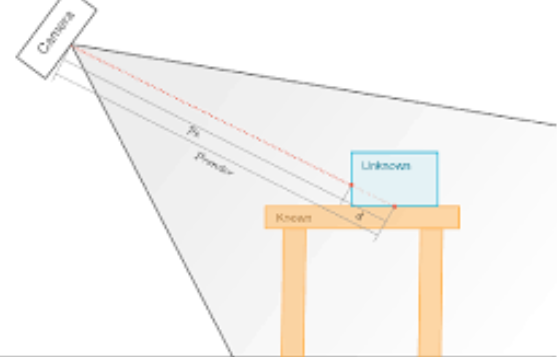

Over the next few years, more and more autonomous mobile robot systems will find their way onto modern shop floors. However, human-machine interfaces will be needed for interventions in unexpected situations such as system deadlocks, algorithm failures, or inabilities. Multi-modal teleoperation using virtual or mixed reality technologies has the potential to be a suitable human-machine interface. Essential challenges in this field include real-time remote control, time-efficient and holistic environment detection using multiple sensors, noise-reduced visualization of sensor data, and object recognition capabilities. This paper summarizes research results on an architecture capable of near real-time, interoperable, and operator-supporting teleoperation. Its focus is a method to efficiently process and visualize point clouds to meet the high frame rate demands of virtual reality applications. To provide near real-time feedback of the robot and its environment over large distances, the presented method is capable of segmenting known objects from unknown objects to reduce bandwidth requirements. The results were evaluated using an industrial articulated robotic arm teleoperated via long-distance UDP/IP communication.
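
The bandwidth idea in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes that the known object's model points are already expressed in the sensor frame, that its pose is available from another source (for a robot arm, for example, its joint state), and that a simple distance threshold is enough to decide which measured points belong to the known object. The function names, the `tolerance` value, and the packet layout are hypothetical.

```python
import struct


def split_known_unknown(points, model_points, tolerance=0.02):
    """Split a point cloud into points near a known model and all other points.

    Brute-force nearest-point check; a real system would likely use a spatial index.
    """
    tol2 = tolerance * tolerance
    known, unknown = [], []
    for p in points:
        near = any(
            (p[0] - m[0]) ** 2 + (p[1] - m[1]) ** 2 + (p[2] - m[2]) ** 2 <= tol2
            for m in model_points
        )
        (known if near else unknown).append(p)
    return known, unknown


def encode_frame(object_id, pose, unknown_points):
    """Pack one frame: known object id and 6-value pose, then unknown points only.

    Hypothetical layout: uint32 id, 6 x float32 pose, uint32 count, then xyz float32 triples.
    """
    header = struct.pack("<I6fI", object_id, *pose, len(unknown_points))
    body = b"".join(struct.pack("<3f", *p) for p in unknown_points)
    return header + body
```

With this layout, a known object costs a fixed 32-byte header per frame regardless of how many measured points fall on it, while each unknown point costs 12 bytes. The receiver would render the known object from a locally stored model at the transmitted pose and display only the remaining points as a cloud.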