Implementation and Evaluation of a 50 kHz, 28μs Motion-to-Pose Latency Head Tracking Instrument

PubDate: March 2019

Teams: UNC-Chapel Hill;The University of Central Florida;TWI Research

Writers: Alex Blate; Mary Whitton; Montek Singh; Greg Welch; Andrei State; Turner Whitted; Henry Fuchs

Abstract

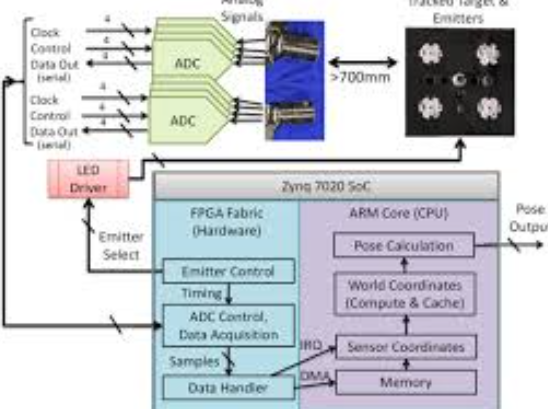

This paper presents the implementation and evaluation of a 50,000-pose-sample-per-second, 6-degree-of-freedom optical head tracking instrument with motion-to-pose latency of 28μs and dynamic precision of 1–2 arcminutes. The instrument uses high-intensity infrared emitters and two duo-lateral photodiode-based optical sensors to triangulate pose. This instrument serves two purposes: it is the first step towards the requisite head tracking component in sub-100μs motion-to-photon latency optical see-through augmented reality (OST AR) head-mounted display (HMD) systems; and it enables new avenues of research into human visual perception, including measuring the thresholds for perceptible real-virtual displacement during head rotation, and other human research requiring high-sample-rate motion tracking. The instrument’s tracking volume is limited to about 120×120×250mm but allows for the full range of natural head rotation and is sufficient for research involving seated users. We discuss how the instrument’s tracking volume is scalable in multiple ways and some of the trade-offs involved therein. Finally, we introduce a novel laser-pointer-based measurement technique for assessing the instrument’s tracking latency and repeatability. We show that the instrument’s motion-to-pose latency is 28μs and that it is repeatable within 1–2 arcminutes at mean rotational velocities (yaw) in excess of 500°/sec.
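
To put the abstract's figures in proportion, the short sketch below works through the arithmetic that links sample rate, latency, and angular displacement. It is an illustration only, not part of the authors' method: the function names are hypothetical, and it assumes a constant yaw velocity of 500°/sec, whereas the paper reports that figure as a mean velocity.

```python
# Illustrative arithmetic only; the names and the constant-velocity
# assumption are not taken from the paper.

ARCMIN_PER_DEGREE = 60.0


def sample_period_us(rate_hz: float) -> float:
    """Time between consecutive pose samples, in microseconds."""
    return 1e6 / rate_hz


def rotation_during_arcmin(velocity_deg_per_s: float, interval_us: float) -> float:
    """Angle swept during interval_us at a constant angular velocity, in arcminutes."""
    return velocity_deg_per_s * (interval_us * 1e-6) * ARCMIN_PER_DEGREE


if __name__ == "__main__":
    # 50 kHz pose rate -> time between samples
    print(f"sample period: {sample_period_us(50_000):.1f} us")
    # Head rotation accumulated during the 28 us motion-to-pose latency
    print(f"rotation in 28 us: {rotation_during_arcmin(500, 28):.2f} arcmin")
    # Head rotation accumulated over a full 100 us motion-to-photon budget
    print(f"rotation in 100 us: {rotation_during_arcmin(500, 100):.2f} arcmin")
```

Under these assumptions, a head turning at 500°/sec sweeps a little under one arcminute during the 28μs tracking latency, which is comparable to the reported 1–2 arcminute precision, and about three arcminutes over a 100μs motion-to-photon interval.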