Rotational motion model for temporal prediction in 360 video coding

PubDate: December 2017

Teams: University of California

Writers: Bharath Vishwanath; Tejaswi Nanjundaswamy; Kenneth Rose


Abstract

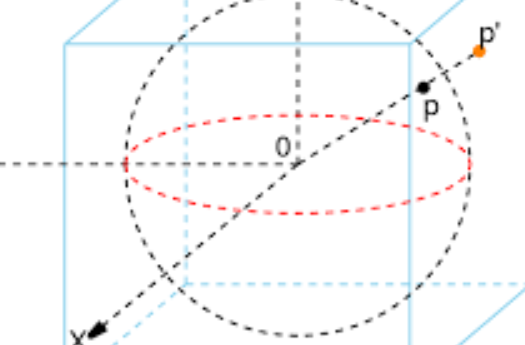

The recent boom in the field of virtual and augmented reality has dramatically increased the prevalence of spherical video. Given the enormous amount of data consumed by spherical video, it is critical to achieve efficient compression for storage and transmission. Prevalent approaches simply project (via different geometries) the spherical video onto planes for processing with traditional 2D video coding standards. However, such approaches are significantly sub-optimal as standard video coders only allow for block translations in the critical tool of motion compensated prediction, which is incompatible with the expected motion in projected spherical video. Specifically, the effective sampling density varies over the sphere and the resulting locally varying warping yields complex non-linear motion in the projected domain. Hence, translation in the projected domain does not preserve an object’s shape and size on the sphere, and its corresponding motion vector does not have a useful physical interpretation. Instead, we propose to characterize the motion directly on the sphere with a rotational motion model, specifically, in terms of sphere rotations along geodesics. This model preserves object shape and size on the sphere. A motion vector in this model implicitly specifies an axis of rotation and the degree of rotation about that axis, to convey the actual motion of objects on the sphere. Complementary to the novel motion model, we further propose an effective motion search technique that is tailored to the sphere’s geometry. Experimental results demonstrate that the proposed framework achieves significant gains over prevalent motion models, across various projection geometries.
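The contrast between block translation in the projected domain and rotation on the sphere can be illustrated with a minimal sketch. It is not the authors' implementation. It assumes an equirectangular projection with one common pixel-to-angle convention, and it assumes the motion vector is read as "move the block center from one point on the sphere to another along the connecting geodesic", which is one plausible reading of the abstract. All function names (`erp_to_sphere`, `geodesic_rotation`, `rotate`, and so on) are hypothetical and used only for illustration.

```python
import math

def erp_to_sphere(u, v, width, height):
    """Map an equirectangular pixel (u, v) to a unit vector (assumed convention)."""
    lon = (u / width) * 2.0 * math.pi - math.pi    # longitude in [-pi, pi)
    lat = math.pi / 2.0 - (v / height) * math.pi   # latitude in [-pi/2, pi/2]
    return (math.cos(lat) * math.cos(lon),
            math.cos(lat) * math.sin(lon),
            math.sin(lat))

def sphere_to_erp(p, width, height):
    """Inverse of erp_to_sphere under the same assumed convention."""
    x, y, z = p
    lon = math.atan2(y, x)
    lat = math.asin(max(-1.0, min(1.0, z)))
    return ((lon + math.pi) / (2.0 * math.pi) * width,
            (math.pi / 2.0 - lat) / math.pi * height)

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def angular_distance(a, b):
    """Great-circle (geodesic) distance in radians between two unit vectors."""
    c = cross(a, b)
    return math.atan2(math.sqrt(dot(c, c)), dot(a, b))

def geodesic_rotation(src, dst):
    """Axis and angle of the rotation that carries src to dst along their geodesic."""
    axis = cross(src, dst)
    norm = math.sqrt(dot(axis, axis))
    if norm < 1e-12:
        raise ValueError("src and dst are (anti)parallel; the axis is not unique")
    angle = math.atan2(norm, dot(src, dst))
    return tuple(c / norm for c in axis), angle

def rotate(p, axis, angle):
    """Rotate point p about a unit axis by angle (Rodrigues' rotation formula)."""
    c, s = math.cos(angle), math.sin(angle)
    k_cross_p = cross(axis, p)
    k_dot_p = dot(axis, p)
    return tuple(p[i] * c + k_cross_p[i] * s + axis[i] * k_dot_p * (1.0 - c)
                 for i in range(3))

if __name__ == "__main__":
    W, H = 4096, 2048                      # illustrative frame size
    center_px, neighbor_px = (2048, 512), (2064, 512)
    shift = (100, -112)                    # illustrative motion in pixels

    a = erp_to_sphere(*center_px, W, H)
    b = erp_to_sphere(*neighbor_px, W, H)
    target = erp_to_sphere(center_px[0] + shift[0], center_px[1] + shift[1], W, H)

    # Rotational model: every pixel of the block undergoes the same sphere rotation.
    axis, angle = geodesic_rotation(a, target)
    ra, rb = rotate(a, axis, angle), rotate(b, axis, angle)

    # Translational model: every pixel is shifted by the same offset in the plane.
    ta = target
    tb = erp_to_sphere(neighbor_px[0] + shift[0], neighbor_px[1] + shift[1], W, H)

    print("separation before motion :", angular_distance(a, b))
    print("after sphere rotation    :", angular_distance(ra, rb))
    print("after planar translation :", angular_distance(ta, tb))
    print("rotated neighbor in ERP  :", sphere_to_erp(rb, W, H))
```

In this sketch the angular separation between the two block samples is unchanged by the sphere rotation, whereas the same pixel offset applied in the equirectangular plane changes it, because the sampling density of the projection varies with latitude. Other projection geometries would need their own pixel-to-sphere mappings in place of `erp_to_sphere` and `sphere_to_erp`.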