Low-Latency Rendering With Dataflow Architectures

PubDate: April 2020

Teams: University College London

Writers: Sebastian Friston

PDF: Low-Latency Rendering With Dataflow Architectures

Abstract

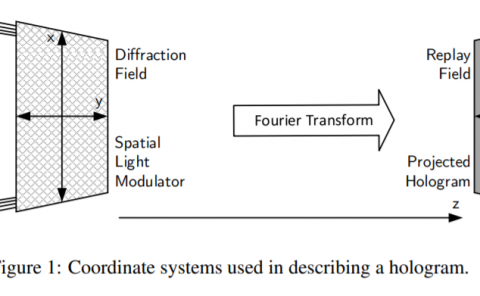

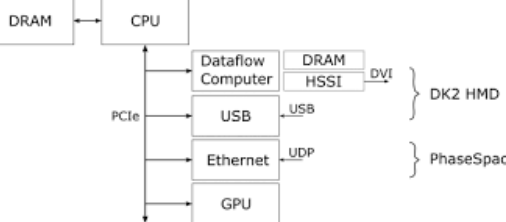

Recent years have seen a resurgence of virtual reality (VR), sparked by the repurposing of low-cost commercial off-the-shelf (COTS) components. VR aims to generate stimuli that appear to come from a source other than the interface through which they are delivered. The synthetic stimuli replace real-world stimuli and transport the user to another, perhaps imaginary, “place.” To do this, we must overcome many challenges, often related to matching the synthetic stimuli to the expectations and behavior of the real world. One way in which the stimuli can fail is in their latency: the time between a user’s action and the computer’s response. We constructed a novel VR renderer that optimized for latency above all else. Our prototype allowed us to explore how latency affects human-computer interaction. We had to completely reconsider the interaction between time, space, and synchronization on displays and in the traditional graphics pipeline. Using a specialized architecture, dataflow computing, we combined consumer, industrial, and prototype components to create an integrated 1:1 room-scale VR system with a latency of under 3 ms. Although this was prototype hardware, the considerations involved in achieving this performance inform the design of future VR pipelines, and our human factors studies have provided new and sometimes surprising contributions to the body of knowledge on latency in HCI.