3D Human Reconstruction from an Image for Mobile Telepresence Systems

PubDate: May 2020

Teams: The University of Tokyo

Writers: Yuki Takeda; Akira Matsuda; Jun Rekimoto

Abstract

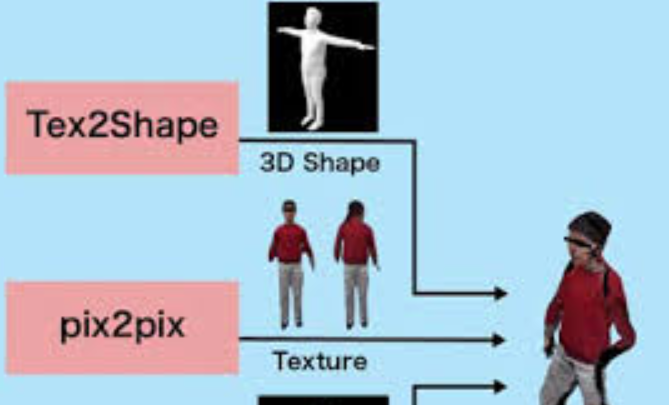

In remote collaboration using a telepresence system, viewing a 3D-reconstructed workspace helps a remote user understand the situation in the workspace: a bird's-eye view shows both the positional relationship between the workspace and the workers and the workers' movements. However, when the workspace is reconstructed in 3D using depth cameras mounted on a telepresence robot or a worker, the backs of workers or objects in front of the depth cameras fall into a blind spot and cannot be captured. As a result, a person at a remote location viewing the 3D-reconstructed workspace cannot see workers or objects in the blind spot, which makes it difficult to understand the positional relationship between the workspace and the workers and to follow the workers' movements. Here, we propose a method that reduces the blind spots of a 3D-reconstructed person by reconstructing a 3D model of the person from an RGB-D image taken with a depth camera and changing the pose of the 3D model according to the movement of the person's body.
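The abstract does not say how the RGB-D image is turned into geometry. As a rough illustration of why a single depth view leaves blind spots, the sketch below back-projects depth pixels into camera-space 3D points with a standard pinhole camera model. It is not the authors' reconstruction method; the function name `backproject_depth` and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical values chosen for the example.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def backproject_depth(
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Convert a depth image (metres, indexed as depth[v][u]) into 3D points.

    Assumes a pinhole camera with focal lengths (fx, fy) and principal
    point (cx, cy), all in pixels. Pixels with depth <= 0 are treated as
    missing measurements and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # no depth reading at this pixel
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Toy 2x2 depth image with one missing pixel (hypothetical values).
depth_image = [
    [1.0, 0.0],
    [2.0, 2.0],
]
points = backproject_depth(depth_image, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
```

Each pixel yields at most one point, on the surface nearest the camera along that ray. Anything behind that surface, such as a worker's back, produces no points at all, which is the blind spot the proposed method aims to reduce by fitting and re-posing a full 3D model of the person.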