Video Saliency Detection via Spatial-Temporal Fusion and Low-Rank Coherency Diffusion

PubDate: February 2017

Teams: Beihang University; Stony Brook University

Writers: Chenglizhao Chen; Shuai Li; Yongguang Wang; Hong Qin; Aimin Hao

Abstract

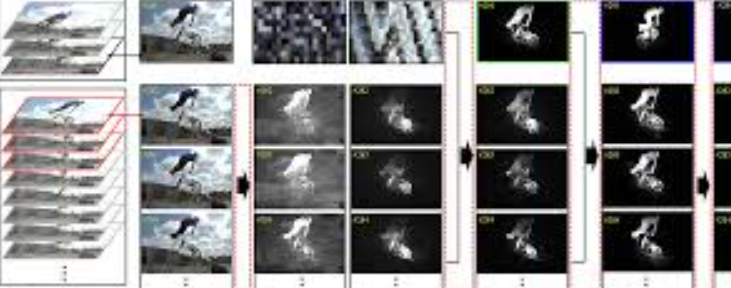

This paper advocates a novel video saliency detection method based on spatial-temporal saliency fusion and low-rank coherency guided saliency diffusion. Conventional methods conduct saliency detection locally, in a frame-by-frame way, and can easily give rise to incorrect low-level saliency maps. To overcome this difficulty, this paper proposes to fuse the color saliency based on global motion clues in a batch-wise fashion. We also propose low-rank coherency guided spatial-temporal saliency diffusion to guarantee the temporal smoothness of the saliency maps. Meanwhile, a series of saliency boosting strategies are designed to further improve the saliency accuracy. First, the original long-term video sequence is equally segmented into many short-term frame batches, and the motion clues of each individual video batch are integrated and diffused temporally to facilitate the computation of color saliency. Then, based on the obtained saliency clues, inter-batch saliency priors are modeled to guide the low-level saliency fusion. After that, both the raw color information and the fused low-level saliency are regarded as the low-rank coherency clues, which are employed to guide the spatial-temporal saliency diffusion with the help of an additional permutation matrix serving as the alternative rank selection strategy. This can guarantee the robustness of the saliency map's temporal consistency and further boost the accuracy of the computed saliency map. Moreover, we conduct extensive experiments on five publicly available benchmarks and make comprehensive, quantitative comparisons between our method and 16 state-of-the-art techniques. All the results demonstrate the superiority of our method in accuracy, reliability, robustness, and versatility.
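The first step described in the abstract, segmenting a long video into short-term frame batches, can be illustrated with a minimal sketch. This is not the authors' implementation: the function name `split_into_batches`, the `batch_size` parameter, and the choice to leave a shorter final batch when the frame count is not divisible are all assumptions made for illustration. The abstract does not state the batch length or how a remainder is handled.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_batches(frames: Sequence[T], batch_size: int) -> List[List[T]]:
    """Split a frame sequence into consecutive, non-overlapping batches.

    Assumption: the last batch may be shorter than batch_size when the
    number of frames is not an exact multiple of batch_size.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return [
        list(frames[start:start + batch_size])
        for start in range(0, len(frames), batch_size)
    ]


# Hypothetical example: 10 frame indices, batches of 4 frames each.
frame_ids = list(range(10))
batches = split_into_batches(frame_ids, batch_size=4)
```

In a pipeline of the kind the abstract outlines, each batch would then be processed as a unit (motion clue integration, color saliency, low-rank diffusion), with priors passed between neighboring batches. Those later stages are not sketched here because the abstract does not give enough detail to reproduce them.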