CubeMap360: Interactive Global Illumination for Augmented Reality in Dynamic Environment

PubDate: March 2020

Teams: Purdue University; University of Tabuk

Writers: A’aeshah Alhakamy; Mihran Tuceryan


Abstract

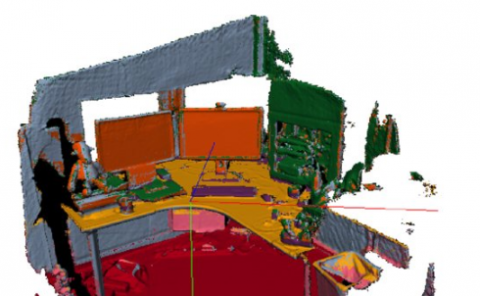

Most augmented/mixed reality (AR/MR) applications still fall short of integrating virtual objects into real scenes so convincingly that they are indistinguishable from reality. While the entertainment industry has achieved remarkable results in every known form of media, that work is usually produced offline. Interactively extracting illumination information from the physical scene and using it to render virtual objects would give AR/MR applications a realistic outcome. In this paper, we present a system that captures the existing illumination of the physical scene and applies it to the rendering of virtual objects added to the scene in augmented reality applications. The illumination model of the developed system consists of several components that work simultaneously at run time. The main component estimates the direction of the incident light (direct illumination) in the real-world scene from a live 360° camera attached to the AR device, using computer vision techniques. A complementary component uses cube map textures to simulate the light reflected from surfaces (indirect illumination) when rendering virtual objects. Finally, shading materials are defined for each virtual object to provide proper lighting and shadowing effects that combine the two previous components. To verify that the system produces valid results, we evaluate the error between the real and virtual illumination in the final scene based on shadow allocation, which is also emphasized in a user study.
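The abstract does not say which computer vision technique recovers the incident light direction from the 360° frame, so the following is only a minimal sketch of one common approach, not the authors' method. It assumes the camera delivers an equirectangular panorama in linear RGB (row 0 at the top, +Y up, longitude 0 facing +Z), treats the pixels within a fixed fraction of the peak luminance as the light source, and averages their directions weighted by luminance and pixel solid angle. All names here (`estimate_light_direction`, `pixel_to_direction`, `threshold_ratio`, `angular_error_deg`) are hypothetical. The angular error helper is likewise an assumed illustration of comparing an estimated direction with a reference one; the paper's own evaluation is based on shadow allocation.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]


def luminance(rgb: RGB) -> float:
    """Relative luminance with Rec. 709 weights (linear RGB assumed)."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def pixel_to_direction(x: int, y: int, width: int, height: int) -> Vec3:
    """Map the centre of equirectangular pixel (x, y) to a unit direction.

    Assumed convention: +Y is up, longitude 0 faces +Z,
    and row 0 is the top of the panorama.
    """
    lon = (x + 0.5) / width * 2.0 * math.pi - math.pi
    lat = math.pi / 2.0 - (y + 0.5) / height * math.pi
    return (math.cos(lat) * math.sin(lon),
            math.sin(lat),
            math.cos(lat) * math.cos(lon))


def estimate_light_direction(image: Sequence[Sequence[RGB]],
                             threshold_ratio: float = 0.9) -> Vec3:
    """Return a dominant light direction for an equirectangular frame.

    Pixels whose luminance is at least threshold_ratio * peak are treated
    as the light source; their directions are averaged, weighted by
    luminance and by cos(latitude), the relative solid angle of a pixel.
    """
    height, width = len(image), len(image[0])
    lum = [[luminance(p) for p in row] for row in image]
    cutoff = threshold_ratio * max(max(row) for row in lum)
    sx = sy = sz = 0.0
    for y in range(height):
        lat = math.pi / 2.0 - (y + 0.5) / height * math.pi
        solid_angle = math.cos(lat)
        for x in range(width):
            if lum[y][x] >= cutoff:
                dx, dy, dz = pixel_to_direction(x, y, width, height)
                w = lum[y][x] * solid_angle
                sx, sy, sz = sx + w * dx, sy + w * dy, sz + w * dz
    norm = math.sqrt(sx * sx + sy * sy + sz * sz)
    if norm == 0.0:
        raise ValueError("no dominant light found (e.g. an all-black frame)")
    return (sx / norm, sy / norm, sz / norm)


def angular_error_deg(a: Vec3, b: Vec3) -> float:
    """Angle in degrees between two direction vectors."""
    dot = sum(p * q for p, q in zip(a, b))
    na = math.sqrt(sum(p * p for p in a))
    nb = math.sqrt(sum(q * q for q in b))
    cos_angle = max(-1.0, min(1.0, dot / (na * nb)))  # guard acos domain
    return math.degrees(math.acos(cos_angle))


if __name__ == "__main__":
    # Tiny 8x4 synthetic panorama: dim background plus one bright "sun" pixel.
    frame = [[(0.05, 0.05, 0.05)] * 8 for _ in range(4)]
    frame[1][2] = (10.0, 9.0, 8.0)
    est = estimate_light_direction(frame)
    print(est, angular_error_deg(est, pixel_to_direction(2, 1, 8, 4)))
```

Thresholding against the peak keeps the sketch cheap enough for per-frame use, but it assumes a single dominant source; a scene with several comparable lights would need clustering of the bright regions instead of one weighted average.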