Pixels and Panoramas: An Enhanced Cubic Mapping Scheme for Video/Image-Based Virtual-Reality Scenes

PubDate: February 2019

Teams: Fudan University

Writers: Yibo Fan; Yize Jin; Zihao Meng; Xiaoyang Zeng

PDF: Pixels and Panoramas: An Enhanced Cubic Mapping Scheme for Video/Image-Based Virtual-Reality Scenes

Abstract

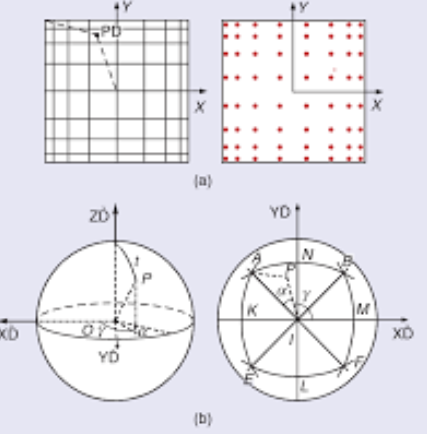

Virtual reality (VR) is rapidly being adopted in fields such as navigation, robotics, and documentation. Compared to 3D modeling, spherical panoramic video provides immersive, omnidirectional views in a much more convenient way. However, state-of-the-art video and image encoding techniques, such as High Efficiency Video Coding (HEVC) or JPEG, require rectangular input sequences. Spherical videos are therefore traditionally projected onto a plane or a cube for convenient encoding, but these conventional projections do not take mapping quality or encoding efficiency into account. In this article, we propose GVScube projection, a method that combines a cube-Snyder (Scube) projection with a gradually varied (GV) sampling method to generate panoramic video. This method achieves better pixel uniformity and less area deviation than other methods.
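To make the notion of area deviation concrete, the following minimal sketch measures how unevenly a conventional cube projection samples the sphere. It is not the GVScube method; it assumes the standard gnomonic cube mapping, in which a point (u, v) on the face z = 1 (with u, v in [-1, 1]) covers a solid angle per unit face area of (1 + u² + v²)^(-3/2). The function names are hypothetical and chosen only for illustration.

```python
import math


def solid_angle_density(u: float, v: float) -> float:
    """Solid angle per unit face area at (u, v) on the cube face z = 1.

    Assumes the standard gnomonic cube mapping with u, v in [-1, 1].
    """
    return (1.0 + u * u + v * v) ** -1.5


def face_solid_angle(n: int = 128) -> float:
    """Midpoint-rule integral of the density over one face (n x n cells)."""
    step = 2.0 / n
    total = 0.0
    for i in range(n):
        u = -1.0 + (i + 0.5) * step
        for j in range(n):
            v = -1.0 + (j + 0.5) * step
            total += solid_angle_density(u, v) * step * step
    return total


# Equal-sized pixels at the face center and at the face corner cover
# very different portions of the sphere.
center = solid_angle_density(0.0, 0.0)
corner = solid_angle_density(1.0, 1.0)
ratio = center / corner  # analytically 3 * sqrt(3)

# Sanity check: the six faces together should cover the whole sphere,
# so one face should integrate to roughly 4 * pi / 6.
one_face = face_solid_angle()

if __name__ == "__main__":
    print(f"center/corner solid-angle ratio: {ratio:.3f}")
    print(f"one face: {one_face:.5f}  (4*pi/6 = {4 * math.pi / 6:.5f})")
```

Under this assumption, a pixel at the center of a face covers about five times the solid angle of an equally sized pixel at a corner. This is the kind of non-uniformity that an equal-area projection and an adjusted sampling scheme aim to reduce.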